Out-of-Spec Policy

Our main goal at RTINGS.com is to help you find the best product for your needs. This means we want our reviews to represent what most of you would get. This is why we buy our own units to test. We don't want the best-case scenario, like websites that receive cherry-picked units from manufacturers. Still, we also don't want an exceptionally bad unit if we get unlucky with the one we bought. Ideally, we would test a large sample size bought from a wide range of retailers, to calculate the deviation of each measurement. Unfortunately, this isn't a financially realistic solution for an independent company like ours.

Here are our formalized policies:

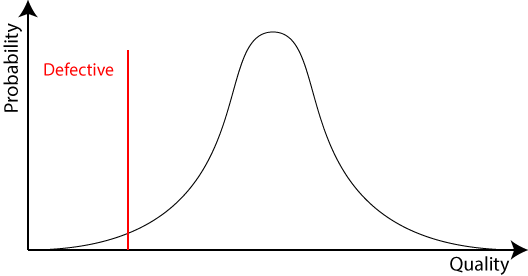

Defective Policy

We consider a unit defective when the product isn't usable. For example, a physically broken screen, a partially broken LED backlight, or a non-responding driver on a pair of headphones. When this happens, we don’t test the unit. We return it and buy a new one instead.

This policy isn’t new. We have been doing this for a few years. For example, in 2016, 2 of the 44 TVs and 1 of the 122 headphones we bought were defective.

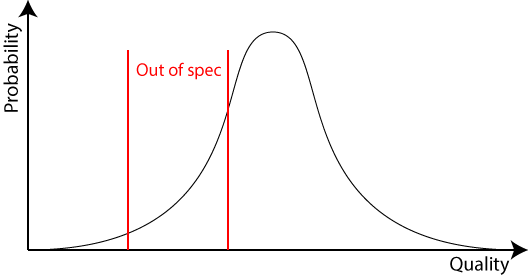

Out-of-Spec Policy

We leave it to the brand to define what counts as out of spec, since they know how their product should perform, and there's no good way for us to know if our unit is worse than average.

As soon as a brand tells us the unit we tested is out of spec:

- We update the review to mention that our unit might not be representative.

- We buy another unit from a different retailer to improve the chances of getting a better one.

- We run unit #2 through all the tests where the results might significantly improve and post all the results on the review page.

This should weed out the unusually bad units that we might otherwise review. To prevent manufacturers from claiming out-of-spec on every review until we end up with a best-case unit, like a manufacturer's sample, we apply this rule:

If Unit #2 is significantly better than Unit #1

- Unit #2 is better, so the review is updated with unit #2's measurements.

Else

- Unit #2 isn't significantly better, so the brand loses its right to use the out-of-spec policy again for 1 year or until we review 10 more units from their brand in that product group, whichever comes first.
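The rule above can be sketched in code to make the "whichever comes first" condition concrete. This is only an illustrative sketch, not how we actually track claims: the names `Suspension`, `resolve_claim`, and `can_claim` are hypothetical, "1 year" is approximated as 365 days, and whether unit #2 is "significantly better" is passed in as a plain yes/no because the policy doesn't define a numeric threshold.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional, Tuple

SUSPENSION_DAYS = 365     # "1 year" in the policy, approximated as 365 days
SUSPENSION_REVIEWS = 10   # "10 more units" in the policy


@dataclass
class Suspension:
    """Hypothetical record of when a brand lost its out-of-spec claim."""
    start: date
    reviews_at_start: int  # units reviewed so far for this brand and product group


def resolve_claim(unit2_significantly_better: bool, today: date,
                  reviews_so_far: int) -> Tuple[str, Optional[Suspension]]:
    """Apply the If/Else rule once unit #2 has been tested."""
    if unit2_significantly_better:
        return "review updated with unit #2's measurements", None
    # Unit #2 isn't significantly better: the brand loses its claim for a while.
    return "brand suspended from out-of-spec policy", Suspension(today, reviews_so_far)


def can_claim(suspension: Optional[Suspension], today: date,
              reviews_so_far: int) -> bool:
    """Return True if the brand may use the out-of-spec policy again."""
    if suspension is None:
        return True
    year_elapsed = today >= suspension.start + timedelta(days=SUSPENSION_DAYS)
    enough_reviews = (reviews_so_far - suspension.reviews_at_start) >= SUSPENSION_REVIEWS
    # "Whichever comes first": either condition ends the suspension.
    return year_elapsed or enough_reviews
```

For example, a brand suspended on January 1, 2017 with 5 units reviewed could claim again either on January 1, 2018 or as soon as its 15th unit in that product group is reviewed.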

Conclusion

Our hope with this policy is to improve the accuracy of our measurements and to better represent what you can buy. We encourage brands to point out the units we have that are outliers according to their expectations. With this policy, you'll know that if a brand didn’t tell us that a unit is out of spec, they don’t think it performs significantly worse overall than what you can get at home.

This doesn't solve the issue of us getting a better-than-normal unit, but we have a few ideas on how we could address this in the future.