There are many ways to calibrate a monitor. The most common and accurate method uses a dedicated calibration tool: a calibrated tristimulus colorimeter. Together with its software, it guides you through adjusting the monitor's own settings (hardware calibration) and generates a software-based calibration profile (an ICC profile) that corrects the monitor's output to match an absolute reference. However, it isn't very accessible for most people: the equipment and software often cost hundreds of dollars, and hiring a professional calibrator instead can also be expensive.

Fortunately, much of the calibration process can be done with reasonable accuracy using basic test patterns. While it might not be fit for critical work in a professional setting, it can substantially enhance the picture quality and provide a much more balanced image. Here's our guide on how to calibrate your monitor to help make sure colors are represented accurately.

Backlight Calibration

The easiest calibration setting is one that most people have probably already used. The 'Backlight' setting changes the amount of light your monitor outputs, making the whole image brighter or dimmer. Changing the backlight level doesn't significantly affect your screen's accuracy, so feel free to set it to whatever looks good to you. This setting is sometimes called 'Brightness', which can be confusing. Generally, if there's a single setting called brightness, it controls the backlight. If there are both backlight and brightness settings, the backlight is the one you should change, as the brightness setting alters how dark tones are displayed, which we'll look at later on.

Picture Mode Calibration

When it comes to color calibration, the best place to start is usually the picture mode. These are the presets the monitor ships with, and they usually change most of the image settings at once. Picking the right one is especially important if you aren't using a colorimeter, because there are few other ways to meaningfully improve your monitor's color accuracy.

For the monitors that we test, we measure each of the picture modes and pick the most accurate one as part of our "Pre-Calibration" test. In general, though, the 'Standard' or 'Custom' preset tends to be the most accurate.

sRGB mode

Some monitors also come with an "sRGB" picture mode, often referred to as an 'sRGB clamp'. It's particularly useful on wide gamut monitors, whose default color reproduction exceeds the sRGB color space and makes some colors in standard sRGB content look over-saturated. However, most monitors lock the rest of the calibration settings when this picture mode is enabled, which might bother some people.
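To illustrate what an sRGB clamp does, the sketch below builds the 3x3 matrix that converts linear sRGB values into the native primaries of a wide gamut panel. It assumes the panel's primaries match Display P3, uses the commonly published RGB-to-XYZ matrices for sRGB and Display P3 (both with a D65 white point), and ignores gamma encoding, so a real monitor's primaries and clamp implementation will differ. Pure sRGB red comes out as a mix of the panel's primaries with less than full native red, which is why a wide gamut panel that shows sRGB red at its full native red looks over-saturated.

```python
# Published linear RGB -> CIE XYZ matrices (D65 white point, 4-decimal precision).
SRGB_TO_XYZ = [
    [0.4124, 0.3576, 0.1805],
    [0.2126, 0.7152, 0.0722],
    [0.0193, 0.1192, 0.9505],
]
# Display P3, standing in for a hypothetical wide gamut panel's native primaries.
P3_TO_XYZ = [
    [0.4866, 0.2657, 0.1982],
    [0.2290, 0.6917, 0.0793],
    [0.0000, 0.0451, 1.0439],
]


def mat_mul(a, b):
    """Multiply two 3x3 matrices."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def mat_vec(m, v):
    """Multiply a 3x3 matrix by a 3-element vector."""
    return [sum(m[i][k] * v[k] for k in range(3)) for i in range(3)]


def mat_inv(m):
    """Invert a 3x3 matrix using its adjugate (transposed cofactors)."""
    (a, b, c), (d, e, f), (g, h, i) = m
    det = a * (e * i - f * h) - b * (d * i - f * g) + c * (d * h - e * g)
    adj = [
        [e * i - f * h, c * h - b * i, b * f - c * e],
        [f * g - d * i, a * i - c * g, c * d - a * f],
        [d * h - e * g, b * g - a * h, a * e - b * d],
    ]
    return [[x / det for x in row] for row in adj]


# sRGB -> panel-native RGB: convert to XYZ, then out through the panel's inverse matrix.
SRGB_TO_PANEL = mat_mul(mat_inv(P3_TO_XYZ), SRGB_TO_XYZ)

red = mat_vec(SRGB_TO_PANEL, [1.0, 0.0, 0.0])
print([round(c, 2) for c in red])  # roughly [0.82, 0.03, 0.02]
```

Because both color spaces share the D65 white point, equal RGB values (white and grays) pass through unchanged; only saturated colors are pulled inward.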

Brightness And Contrast Calibration

The brightness and contrast settings change the way the screen displays tones at different brightness levels. These are easy options to adjust when calibrating your screen without a dedicated calibration tool, as most of the job can be done fairly accurately while simply displaying different gradient patterns.

Brightness

The brightness setting affects the way the monitor handles darker colors. If it's set too high, blacks will look gray, and the image will have less contrast. If it's set too low, blacks will get "crushed". Crushing means that instead of showing distinct steps of near-black gray, the monitor shows them all as pure black. It can give the image a very high-contrast look at first glance, but it loses a significant amount of shadow detail.

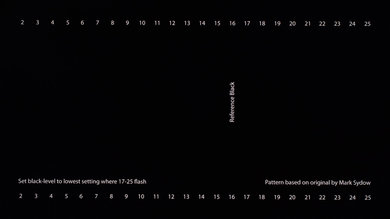

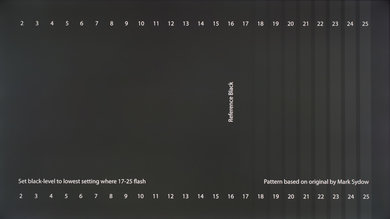

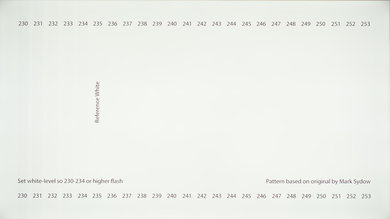

When calibrating your monitor, the best way to adjust the brightness is with a near-black gradient test pattern like the one above. Raise or lower your brightness setting until the 17th step disappears completely, then move the setting back up one notch so that the step is visible again.
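If you'd like to generate a similar pattern yourself, here's a minimal sketch that writes a binary PGM image with one vertical gray bar per 8-bit code value. The levels (0 to 24 for near-black, 230 to 255 for near-white), the bar width, and the file names are illustrative assumptions, not the exact pattern shown above. View the result full screen at 100% zoom in a viewer that can open PGM files, such as GIMP.

```python
def step_pattern_pgm(levels, bar_width=64, height=256):
    """Return a binary PGM (P5) image with one vertical gray bar per level.

    Levels are full-range 8-bit code values (0-255), drawn left to right.
    """
    levels = list(levels)
    width = bar_width * len(levels)
    header = f"P5\n{width} {height}\n255\n".encode("ascii")
    # One row of pixels: each level repeated bar_width times.
    row = bytes(level for level in levels for _ in range(bar_width))
    return header + row * height


if __name__ == "__main__":
    # Hypothetical file names for a near-black and a near-white step pattern.
    with open("near_black.pgm", "wb") as f:
        f.write(step_pattern_pgm(range(0, 25)))
    with open("near_white.pgm", "wb") as f:
        f.write(step_pattern_pgm(range(230, 256)))
```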

Some monitors have a 'Black adjust' or 'Black boost' setting that lets you adjust the black level. You can use it to make blacks look darker, but since the display can't produce a deeper black than its panel allows, doing so ends up crushing near-black detail. Some gamers use it to make blacks look lighter, which makes it easier to spot objects in dark scenes, but this comes at the cost of image accuracy. It's best to leave this setting at its default.

Most graphics card software applications have a dynamic range setting that lets you toggle between limited and full RGB range. Full RGB range means the image uses all 256 values (0 to 255), with 0 being absolute black and 255 being absolute white. It's the default range for sRGB and the recommended setting for most modern LCD monitors. The limited range (16-235) is mainly used for TVs, as most movies and TV shows are mastered in limited range. In short, the source and the display have to match: sending a limited-range signal to a full-range monitor makes the image look washed out, and sending a full-range signal to a limited-range display like a TV crushes blacks. The pattern we use is for calibrating a full-range display.
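Both mismatch cases can be sketched with the standard 8-bit mapping between full range and limited range, where the 219 steps from 16 to 235 cover the same span as the 255 steps from 0 to 255. The function names are hypothetical, and exact rounding behavior varies between drivers and displays.

```python
def full_to_limited(v):
    """Encode a full-range code value (0-255) as limited range (16-235)."""
    return round(16 + v * 219 / 255)


def limited_to_full(v):
    """Expand a limited-range code value to full range the way a limited-range
    display does: anything at or below 16 becomes black, at or above 235 white."""
    return min(255, max(0, round((v - 16) * 255 / 219)))


# Case 1: a limited signal reaches a full-range monitor, which shows the values
# as-is. Black arrives as 16 (dark gray) and white as 235: washed out.
print(full_to_limited(0), full_to_limited(255))  # 16 235

# Case 2: a full signal reaches a limited-range display. Every value from 0 to 16
# lands on black, so near-black detail is crushed.
print([limited_to_full(v) for v in (0, 8, 16, 17, 20)])  # [0, 0, 0, 1, 5]
```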

Contrast

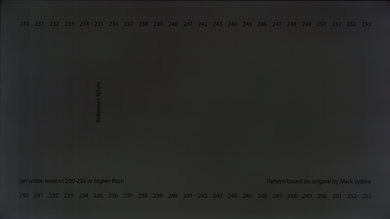

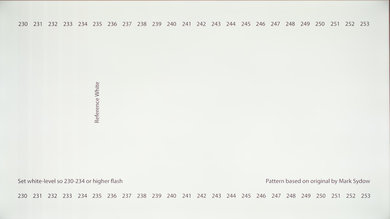

The contrast setting is very similar to the brightness setting, but it affects the brighter parts of the image rather than the darker ones. Much like brightness, setting it too high causes bright tones to "clip", the highlight equivalent of crushing: distinct near-white steps all turn into the same white. Setting it too low darkens the image and reduces contrast.

As with brightness, adjust the contrast until the steps up to 234 show some visible detail. The last few steps should be very faint, so it might take some trial and error.
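Crushing and clipping can be illustrated with a deliberately simplified model, assumed here for illustration only: brightness as an offset added to every code value, contrast as a gain multiplied into it, and the result clipped to the 0-255 range. Real monitors don't necessarily implement their controls this way, but the model shows why distinct steps of a test pattern merge once either setting goes too far.

```python
def apply_controls(level, offset=0.0, gain=1.0):
    """Hypothetical model: contrast as a gain, brightness as an offset,
    with the result clipped to the 0-255 range the panel can show."""
    return min(255.0, max(0.0, level * gain + offset))


def visible_steps(levels, offset=0.0, gain=1.0):
    """Count how many distinct output values a run of input steps produces."""
    return len({round(apply_controls(v, offset, gain)) for v in levels})


# Brightness too low: steps 0-10 all collapse to black (crushed).
print(visible_steps(range(26), offset=-10))  # 16 of 26 steps remain distinct

# Contrast too high: steps 243-255 all clip to the same white.
print(visible_steps(range(230, 256), gain=1.05))  # 14 of 26 steps remain distinct

# Brightness too high: nothing is crushed, but "black" is now output as 10 (gray).
print(apply_controls(0, offset=10))  # 10.0
```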

Sharpness Calibration

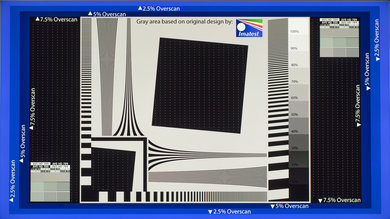

Sharpness is one of the easiest settings to adjust, and generally, the default tends to be fairly accurate. Adjusting the sharpness changes the look of the edges of shapes that appear on-screen. Having it too low will give you a blurry image, while setting it too high will give the picture an odd look with strange-looking edges. It'll also usually cause close, narrow lines to blend and produce a moiré effect.

The simplest way to calibrate this aspect if you aren't pleased with the default is to set it at max, then lower it until no strange pattern forms between the lines and shapes of the test image.
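Monitors differ in how they implement sharpness processing, but a common edge-enhancement technique is unsharp masking: subtract a blurred copy of the image and add the difference back, scaled by a strength. The 1D sketch below is an illustration of that technique, not any monitor's actual algorithm. It shows how a higher strength pushes values past both sides of an edge, producing the dark and bright halos that make oversharpened edges look odd.

```python
def blur(signal):
    """3-tap [1/4, 1/2, 1/4] blur; the end samples are repeated at the borders."""
    padded = [signal[0]] + list(signal) + [signal[-1]]
    return [0.25 * padded[i - 1] + 0.5 * padded[i] + 0.25 * padded[i + 1]
            for i in range(1, len(padded) - 1)]


def sharpen(signal, amount):
    """Unsharp mask: add back `amount` times the difference from the blur."""
    return [s + amount * (s - b) for s, b in zip(signal, blur(signal))]


edge = [0.2, 0.2, 0.2, 0.8, 0.8, 0.8]  # a dark-to-bright edge (0 = black, 1 = white)

print([round(v, 2) for v in sharpen(edge, 0.0)])  # [0.2, 0.2, 0.2, 0.8, 0.8, 0.8]
print([round(v, 2) for v in sharpen(edge, 1.0)])  # [0.2, 0.2, 0.05, 0.95, 0.8, 0.8]
```

With the strength at 1.0, the pixel just before the edge dips darker than the dark side and the pixel just after it rises brighter than the bright side: the halo the test pattern reveals.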

Color Temperature Calibration

The color temperature setting adjusts the overall tint of the picture. A cooler (higher Kelvin) temperature gives a blue tint, while a warmer (lower Kelvin) temperature gives a yellow or orange tint. Think of it as the tone of daylight at various times of the day. When the sun is high at noon, clouds look almost pure white without a distinct yellow cast. In the morning and evening, as the sun rises and sets, the light turns yellow, and at night, white objects look bluish under moonlight. We recommend a 6500K color temperature, which is the standard for most screen calibrations and corresponds to average midday daylight (CIE standard illuminant D65). It's generally on the warmer side of most monitors' scales. Some people find it too yellow, so feel free to adjust it to your preference.
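Color temperature figures like 6500K are correlated color temperatures, derived from a white point's CIE 1931 xy chromaticity. The sketch below uses McCamy's cubic approximation, a close estimate for typical white points, and checks it against the D65 white point (x = 0.3127, y = 0.3290) and, as a warm reference, CIE illuminant A, which represents incandescent light.

```python
def mccamy_cct(x, y):
    """Approximate correlated color temperature (kelvin) from CIE 1931 xy
    chromaticity using McCamy's cubic formula."""
    n = (x - 0.3320) / (0.1858 - y)
    return 449 * n**3 + 3525 * n**2 + 6823.3 * n + 5520.33


print(round(mccamy_cct(0.3127, 0.3290)))  # D65 white point: about 6505
print(round(mccamy_cct(0.4476, 0.4074)))  # Illuminant A (incandescent): about 2856
```

D65 lands just above 6500K, which is why it's usually described as a 6500K white point.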

White Balance Calibration

The white balance refers to the balance of colors across different shades of gray. A neutral white or gray has equal amounts of red, green, and blue, with only the luminance distinguishing one shade from another.
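On an ideal sRGB display, equal red, green, and blue values always land on the same white point (D65), and only the luminance changes from one gray to the next. The sketch below checks this with the standard sRGB decoding curve and RGB-to-XYZ matrix. It describes the ideal that white balance controls aim for, not what any particular panel produces, and the function names are illustrative.

```python
# Standard sRGB linear RGB -> CIE XYZ matrix (D65 white point).
SRGB_TO_XYZ = [
    [0.4124, 0.3576, 0.1805],
    [0.2126, 0.7152, 0.0722],
    [0.0193, 0.1192, 0.9505],
]


def srgb_to_linear(code):
    """Decode an 8-bit sRGB code value (0-255) to linear light (0-1)."""
    c = code / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def chromaticity(r, g, b):
    """Return CIE 1931 (x, y) chromaticity and luminance Y for an sRGB color.

    Pure black (0, 0, 0) has no defined chromaticity, so it is rejected.
    """
    lin = [srgb_to_linear(v) for v in (r, g, b)]
    X, Y, Z = (sum(m * c for m, c in zip(row, lin)) for row in SRGB_TO_XYZ)
    total = X + Y + Z
    if total == 0:
        raise ValueError("black has no chromaticity")
    return X / total, Y / total, Y


for level in (64, 128, 192, 255):
    x, y, lum = chromaticity(level, level, level)
    # x and y stay at about 0.3127, 0.3290 (D65); only Y changes.
    print(f"gray {level}: x={x:.4f} y={y:.4f} Y={lum:.3f}")
```

A slightly tinted gray such as (128, 128, 140) shifts away from those coordinates, which is the kind of error a colorimeter measures when checking white balance.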

Unfortunately, it isn't possible to adjust the white balance settings with any real accuracy without the necessary equipment. In general, we recommend most people keep them at their defaults, as they can easily make things worse. Even copying the settings we made using a colorimeter isn't recommended, since the correct values will most likely differ between units of the same monitor.

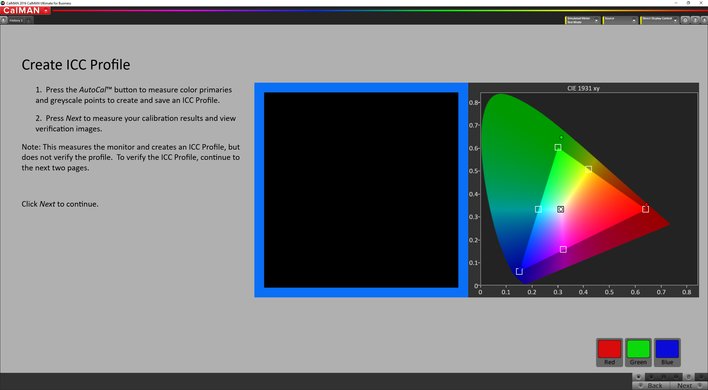

ICC Profiles Calibration

If you do calibrate your monitor using a tool, your calibration software will likely create a calibration profile with an .icm or .icc extension. This is an ICC profile, effectively a reference table that your computer's programs can use to display content accurately on your screen. In general, Apple's macOS handles ICC profiles better, whereas Windows and Windows applications tend to be quite inconsistent about how and when they use them. Generally speaking, hardware calibration yields better results, but since most monitors don't allow for full calibration using their on-screen display (OSD) settings, ICC profiles are essential for getting the best possible image accuracy.
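If you're curious what's inside a profile, every ICC profile starts with a 128-byte header that identifies it. This sketch reads a few header fields following the field offsets in the ICC specification; the file name in the usage line is a placeholder. A display profile should report the device class `mntr`.

```python
import struct


def read_icc_header(data: bytes) -> dict:
    """Parse a few fields from the 128-byte header of an ICC profile."""
    # Every ICC profile carries the signature 'acsp' at byte offset 36.
    if len(data) < 128 or data[36:40] != b"acsp":
        raise ValueError("not an ICC profile")
    (size,) = struct.unpack(">I", data[0:4])  # big-endian total profile size
    return {
        "size": size,
        # Byte 8 is the major version; the high nibble of byte 9 is the minor version.
        "version": f"{data[8]}.{data[9] >> 4}",
        "device_class": data[12:16].decode("ascii"),  # 'mntr' = display device
        "color_space": data[16:20].decode("ascii").strip(),  # e.g. 'RGB'
        "pcs": data[20:24].decode("ascii").strip(),  # profile connection space: 'XYZ' or 'Lab'
    }


if __name__ == "__main__":
    # Placeholder path: point it at the profile your calibration software created.
    with open("my_monitor.icm", "rb") as f:
        print(read_icc_header(f.read(128)))
```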

Much like the white balance settings, we don't recommend using an ICC profile built on another monitor, even if it's an identical model. Accuracy varies noticeably between units, so the correction a profile applies might not match what your monitor needs.

Motion Settings

While motion handling is usually less of an issue than on TVs, there are a few settings you can adjust to change how the screen displays motion.

Overdrive

The overdrive setting adjusts how quickly the monitor's pixels transition from one state to the next. The default setting is usually optimal, but you might prefer it higher or lower. An overdrive setting that's too strong causes the pixels to overshoot their target, which creates a strange-looking inverse ghost trailing moving objects on-screen. A setting that's too weak creates more motion blur, with longer trails behind moving objects. You can learn more about overdrive in our motion blur article.
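The overshoot trade-off can be sketched with a toy model that is entirely hypothetical: at each small time step the pixel moves a fixed fraction of the way toward its drive level, and overdrive raises the drive level past the target during the first frame of a transition. Real monitors typically use lookup tables tuned for each transition, so the numbers here only show the trend.

```python
def simulate_transition(start, target, gain, frames=3, substeps=5, rate=0.2):
    """Hypothetical first-order pixel model with overdrive.

    During the first frame the pixel is driven past the target by `gain`
    times the size of the transition; afterwards it is driven at the target.
    Each substep the pixel moves `rate` of the way toward the drive level.
    Returns the pixel level (0 = black, 1 = white) after every substep.
    """
    level = start
    trace = []
    for frame in range(frames):
        drive = target + gain * (target - start) if frame == 0 else target
        drive = min(1.0, max(0.0, drive))  # the panel can't drive past its limits
        for _ in range(substeps):
            level += rate * (drive - level)
            trace.append(level)
    return trace


for gain in (0.0, 0.4, 1.0):
    trace = simulate_transition(0.0, 0.5, gain)
    # trace[4] is the level at the end of the first frame.
    print(f"gain {gain}: after 1 frame {trace[4]:.3f}, peak {max(trace):.3f}")
```

With no overdrive the pixel is still well short of the target after one frame (blur); a moderate gain gets much closer without passing it; a high gain overshoots the 0.5 target, which shows up on screen as the inverse ghost.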

G-SYNC and FreeSync

G-SYNC and FreeSync are variable refresh rate (VRR) technologies that let the monitor automatically synchronize its refresh rate with the frame rate of the source. It's a great feature that reduces stuttering and generally makes for a more fluid experience. If your monitor supports it, we generally recommend keeping it on at all times unless you encounter bugs with certain games. G-SYNC specifically includes a "variable overdrive" feature that automatically adjusts the overdrive setting according to the game's frame rate. You can learn more about these technologies in our G-SYNC vs FreeSync article.
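Why variable refresh rate reduces stutter can be shown with a little arithmetic. The sketch below assumes frames finish rendering at perfectly even intervals and compares how long each frame stays on screen at 45 fps on a fixed 60Hz display with V-Sync versus a VRR display running inside its supported range. Exact fractions avoid floating-point rounding; the function names are illustrative.

```python
import math
from fractions import Fraction


def fixed_refresh_durations(fps, hz, frames):
    """Fixed refresh with V-Sync: each new frame waits for the next refresh tick,
    so every frame stays on screen for a whole number of refreshes (seconds)."""
    ticks = [math.ceil(Fraction(i, fps) * hz) for i in range(frames + 1)]
    return [Fraction(b - a, hz) for a, b in zip(ticks, ticks[1:])]


def vrr_durations(fps, frames):
    """VRR inside the supported range: the display refreshes as soon as each
    frame is ready, so every frame is shown for exactly 1/fps seconds."""
    return [Fraction(1, fps)] * frames


def to_ms(durations):
    """Convert durations in seconds to milliseconds, rounded for display."""
    return [round(float(d) * 1000, 1) for d in durations]


print(to_ms(fixed_refresh_durations(45, 60, 6)))  # [33.3, 16.7, 16.7, 33.3, 16.7, 16.7]
print(to_ms(vrr_durations(45, 6)))  # [22.2, 22.2, 22.2, 22.2, 22.2, 22.2]
```

On the fixed 60Hz display, frames alternate between one and two refreshes on screen, and that uneven pacing is perceived as stutter; with VRR every frame gets the same screen time.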

Refresh Rate

The refresh rate of your monitor is the number of times per second it updates the image on-screen, measured in hertz (Hz). The standard refresh rate on most monitors is 60Hz, but some screens, usually gaming-oriented ones, support 360Hz or more. You should almost always set it as high as your monitor supports. You can learn more about refresh rates and our related tests in our refresh rate article.
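A refresh rate converts directly into the time between screen updates: 1000 milliseconds divided by the refresh rate. The refresh rates in this short sketch are just examples.

```python
def frame_time_ms(refresh_hz):
    """Time between screen updates, in milliseconds."""
    return 1000 / refresh_hz


for hz in (60, 144, 240, 360):
    print(f"{hz} Hz -> {frame_time_ms(hz):.2f} ms")
# 60 Hz -> 16.67 ms, 144 Hz -> 6.94 ms, 240 Hz -> 4.17 ms, 360 Hz -> 2.78 ms
```

Going from 60Hz to 144Hz shortens the time between updates by almost 10 ms, while going from 240Hz to 360Hz saves about 1.4 ms, so each step up brings a smaller improvement.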

Additional Calibration Settings

Game Mode

Some monitors also feature a 'Game Mode' (sometimes called 'low input lag mode'). We recommend using this feature, as it usually lowers the monitor's input lag without altering the image quality, making it more responsive.

Low Blue Light

Similar to modern mobile devices like smartphones and tablets, many monitors have a blue light filter that can help reduce eye strain. It's often called 'Low Blue Light' or 'Reader Mode,' and it reduces the amount of blue light emitted by the screen, giving the image an amber shade. It's said to be less fatiguing on the eyes, especially at night. Most desktop operating systems have this feature built in: 'Night light' on Windows, 'Night Shift' on macOS, and 'Night Light' on Chrome OS. There are also third-party apps that do the same. We recommend leaving this off when calibrating your monitor.

Eco Mode

Many monitors come out of the box with a series of energy-saving features enabled. These generally alter the picture quality in undesirable ways and are better kept off. Monitors usually also have a dedicated "eco" picture mode that enables these features. If you'd like to reduce your monitor's energy consumption, we recommend lowering the backlight setting instead. Simply turning off your monitor whenever you step away from your desk also greatly reduces idle consumption. It's best to leave this setting off when calibrating the monitor.

Conclusion

Unless you have the equipment for a more thorough calibration, calibrating a monitor by eye is largely a process of trial and error, so it may take a few tries before you get the results you're looking for. Fortunately, most monitors have a reset feature that discards your changes and restores the default settings if you end up with worse image quality. Content creators are generally advised to recalibrate their displays once a month, since accuracy can drift over time as the panel ages, but most people can probably go much longer between calibrations.