- 1080p @ 60Hz: 5.0%

- 1080p @ 120Hz: 5.0%

- 1080p @ Max Refresh Rate: 10.0%

- 4k @ 60Hz: 10.0%

- 4k @ 60Hz @ 4:4:4: 10.0%

- 4k @ 120Hz: 20.0%

- 4k @ Max Refresh Rate: 40.0%
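The percentages above add up to 100%, which suggests they act as weights for the individual tests. Assuming the overall input lag score is a weighted average of per-test sub-scores on a 0-10 scale, it could be sketched like this. The `WEIGHTS` table, the `weighted_score` name, and the 0-10 scale are assumptions for illustration, and how each measured delay is turned into a sub-score isn't covered here.

```python
# Hypothetical sketch: weights taken from the list above, expressed as fractions.
WEIGHTS = {
    "1080p @ 60Hz": 0.05,
    "1080p @ 120Hz": 0.05,
    "1080p @ Max Refresh Rate": 0.10,
    "4k @ 60Hz": 0.10,
    "4k @ 60Hz @ 4:4:4": 0.10,
    "4k @ 120Hz": 0.20,
    "4k @ Max Refresh Rate": 0.40,
}


def weighted_score(sub_scores: dict[str, float]) -> float:
    """Combine per-test sub-scores (assumed 0-10) into one weighted average.

    Assumes every test in WEIGHTS has a sub-score; handling of tests a TV
    doesn't support is not modeled here.
    """
    missing = WEIGHTS.keys() - sub_scores.keys()
    if missing:
        raise ValueError(f"missing sub-scores: {sorted(missing)}")
    return sum(WEIGHTS[test] * sub_scores[test] for test in WEIGHTS)
```

Because 4k @ Max Refresh Rate carries 40% of the weight, a weak result there pulls the overall score down far more than a weak 1080p @ 60Hz result would.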

Input lag is the amount of time it takes for your TV to display a signal on the screen from when the source sends it. It's especially important for playing reaction-based video games because you want the lowest input lag possible for a responsive gaming experience. Low input lag tends to come at the cost of less image processing on TVs, which is why there are specific Game Modes for low input lag. Even though TVs aren't as good as monitors in this regard, technology is slowly catching up.

We measure input lag using a specialized tool, testing various combinations of resolution and refresh rate to cover a variety of real-world conditions.

Learn more about input lag on monitors and projectors.


Test Methodology Coverage

Our input lag testing hasn't changed much over the years. The specific formats we test have changed as TVs support new resolutions and refresh rates, but the test itself is done the same way. Our scoring, on the other hand, has changed: as TV technology improves, so do our expectations, so a high-end TV from eight years ago wouldn't score the same as a high-end TV today. As such, any TV tested on version 1.6 or later is directly comparable, but for TVs tested before 1.6, you can only compare the input lag measurements themselves, not the scores. Learn how our test benches and scoring system work.
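The "1.6 or later" rule is easy to get wrong if test bench versions are compared as plain text, because "1.10" sorts before "1.6" alphabetically. A minimal sketch, assuming versions are dot-separated integers; `parse_version` and `scores_comparable` are hypothetical helper names:

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Turn a test bench version like "1.10" into (1, 10) for numeric comparison."""
    return tuple(int(part) for part in version.split("."))


# Scores are only directly comparable from test bench 1.6 onward.
FIRST_COMPARABLE = parse_version("1.6")


def scores_comparable(bench_a: str, bench_b: str) -> bool:
    """True if both TVs were tested on test bench 1.6 or later."""
    return min(parse_version(bench_a), parse_version(bench_b)) >= FIRST_COMPARABLE
```

Comparing tuples of integers orders 1.9 before 1.10 correctly, which a string comparison would not.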

Our latest test bench update, version 2.0, removed the 1440p tests entirely. It also replaced the fixed 144Hz tests with max refresh rate tests, since some TVs now support much higher refresh rates.

| Test | 1.6 | 1.7 | 1.8 | 1.9 | 1.10 | 1.11 | 2.0 |
|---|---|---|---|---|---|---|---|
| 1080p @ 60Hz, 120Hz | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| 1080p @ 144Hz | ❌ | ✅ | ✅ | ✅ | ✅ | ✅ | ❌ |
| 1080p @ Max Refresh Rate | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ✅ |
| 1440p @ 60Hz, 120Hz | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ❌ |
| 1440p @ 144Hz | ❌ | ✅ | ✅ | ✅ | ✅ | ✅ | ❌ |
| 4k @ 60Hz, 120Hz | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |
| 4k @ 144Hz | ❌ | ✅ | ✅ | ✅ | ✅ | ✅ | ❌ |
| 4k @ Max Refresh Rate | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ✅ |

When It Matters

Input lag matters the most when playing video games, either on a console or on a PC. Quick reflexes are key to fast-paced games like fighting or FPS games. Lower input lag can mean the difference between a well-timed reaction for a win or a late response that results in a loss. It doesn't matter for watching movies, though, so you have nothing to worry about unless you're a gamer. You might notice some delay when scrolling through your smart TV's menu, but it's rarely an issue for most people.

Our Tests

Now, let's talk about how we measure the input lag. It's a fairly simple test because everything is done using our dedicated photodiode tool and special software that we developed in-house. We start by placing the photodiode tool at the center of the screen because that's where it records the data in the middle of the refresh rate cycle, so the results aren't skewed toward the beginning or end of the cycle. We connect our test PC to the tool and the TV. The tool flashes a white square on the screen and records the time it takes until the screen starts to display the white square; this is one input lag measurement. It stops the measurement the moment the pixels start to change color, so response time isn't included in the result. The tool records multiple data points, and our software averages them, excluding any outliers.
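The averaging step can be sketched as follows. The exact outlier rule isn't described, so this assumes a simple one: discard any sample more than a fixed number of milliseconds from the median. The function name and the 2 ms threshold are hypothetical.

```python
from statistics import mean, median_low


def average_without_outliers(samples_ms: list[float], max_deviation_ms: float = 2.0) -> float:
    """Average input-lag samples after discarding outliers.

    Hypothetical rule: drop any sample more than max_deviation_ms away from
    the median. median_low always returns one of the samples, so at least
    one sample is kept and mean() never receives an empty list.
    """
    if not samples_ms:
        raise ValueError("need at least one sample")
    center = median_low(samples_ms)
    kept = [s for s in samples_ms if abs(s - center) <= max_deviation_ms]
    return mean(kept)
```

For example, `average_without_outliers([9.1, 9.3, 9.2, 15.8, 9.0])` drops the 15.8 ms reading and returns about 9.15 ms. Using the median as the reference point keeps a single bad reading from shifting the threshold, which a mean-based cutoff would not.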

When a TV displays a new image, it progressively displays it on the screen from top to bottom, so the image first appears at the top. As we have the photodiode tool placed in the middle, it records the image when it's halfway through its refresh rate cycle. On a 120Hz TV, it displays 120 images every second, so every image takes 8.33 ms to be displayed on the screen. Since we have the tool in the middle of the screen, we're measuring it halfway through the cycle, so it takes 4.17 ms to get there; this is the minimum input lag we can measure on a 120Hz TV. If we measure an input lag of 5.17 ms, then in reality, it's only taking an extra millisecond of lag to appear on the screen. For a 60Hz TV, the minimum is 8.33 ms.
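The arithmetic in the paragraph above can be written out as a small sketch, assuming a constant top-to-bottom scan and the photodiode sitting exactly at the vertical center of the screen. Both function names are illustrative.

```python
def center_scanout_delay_ms(refresh_hz: float) -> float:
    """Time for a top-to-bottom scan to reach the vertical center of the screen."""
    frame_time_ms = 1000.0 / refresh_hz  # e.g. 8.33 ms per frame at 120Hz
    return frame_time_ms / 2


def extra_lag_ms(measured_ms: float, refresh_hz: float) -> float:
    """Delay beyond the unavoidable scan-out time to the middle of the screen."""
    return measured_ms - center_scanout_delay_ms(refresh_hz)


for hz in (60, 120):
    print(f"{hz}Hz minimum at center: {center_scanout_delay_ms(hz):.2f} ms")
print(f"5.17 ms at 120Hz -> {extra_lag_ms(5.17, 120):.2f} ms extra")
```

This reproduces the figures above: 8.33 ms at 60Hz, 4.17 ms at 120Hz, and about 1 ms of extra lag for a 5.17 ms result at 120Hz.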

The process is the same for all of our input lag measurements. We take multiple measurements across a range of resolutions and refresh rates, with a few extra tests for specific modes. Unless otherwise noted, all of these tests are done in the TV's lowest input-lag mode, usually called "Game" mode.

1080p @ 60Hz

This test measures the input lag of 1080p signals at a 60Hz refresh rate. It's especially important for older consoles (like the PS4 or Xbox One) or for PC gamers who play at a lower resolution at 60Hz.

1080p @ 60Hz Outside Game Mode

We repeat the same process as the test above but with Game Mode disabled. This is to show the difference between being in and out of Game Mode. High input lag in this mode could be a problem when watching movies or shows, as the interface will feel sluggish if it's too high. This can also cause audio-video sync issues when watching movies from an external source.

1080p @ 120Hz

Next, we measure the input lag at a lower 1080p resolution but a high 120Hz refresh rate. Although this format has become less popular in recent years, some gamers still choose it on recent consoles, since more games support high refresh rates at a lower resolution.

1080p @ 144Hz

This number only matters if you plan on using your TV for PC gaming, as consoles don't support 144Hz refresh rates.

4k @ 60Hz

The 4k @ 60Hz input lag is probably the most important result for most console gamers. Even with the latest consoles, like the PS5 Pro and Xbox Series X, the majority of games are limited to 4k @ 60Hz in their highest-quality performance modes.

4k @ 60Hz @ 4:4:4

This test is important for people who want to use the TV as a PC monitor. Chroma 4:4:4 is a video format without chroma subsampling, meaning color information is kept at full resolution, which is necessary for proper text clarity from a PC. We check whether this format adds any delay; for nearly all of our TVs, it doesn't add any compared to the regular 4k @ 60Hz input lag.
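To see why full chroma matters for text, it helps to compare how much color detail common subsampling schemes keep. A minimal sketch; `CHROMA_DIVISORS` and `chroma_resolution` are illustrative names, not from any particular library:

```python
# Horizontal and vertical divisors applied to the color (chroma) channels.
CHROMA_DIVISORS = {
    "4:4:4": (1, 1),  # full-resolution color
    "4:2:2": (2, 1),  # color halved horizontally
    "4:2:0": (2, 2),  # color halved horizontally and vertically
}


def chroma_resolution(width: int, height: int, scheme: str) -> tuple[int, int]:
    """Resolution at which color information is carried for a given scheme."""
    dx, dy = CHROMA_DIVISORS[scheme]
    return width // dx, height // dy
```

With 4:2:0, a 4k signal carries color at only 1920x1080, so thin colored text edges can look smeared, while 4:4:4 keeps color at the full 3840x2160.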

4k @ 60Hz Outside Game Mode

As with 1080p @ 60Hz Outside Game Mode, we measure the input lag outside of Game Mode, this time in 4k. Since most TVs have a native 4k resolution, this number is more important than the 1080p lag while you're scrolling through the menus.

4k @ 60Hz With Interpolation

Motion interpolation is an image processing technique that increases a video's frame rate by generating new in-between frames, for example, raising a 30 fps video to 60 fps. However, on most TVs, you need to disable Game Mode to enable motion interpolation, as only Samsung offers motion interpolation in Game Mode. As a result, most TVs have high input lag with motion interpolation enabled. We measure this with the motion interpolation settings at their highest to capture a worst-case scenario.

4k @ 120Hz

This test is important if you're a gamer with an HDMI 2.1 graphics card or a recent console like the Xbox Series S|X, PS5, or PS5 Pro. Since this format requires an HDMI 2.1 source, we use our HDMI 2.1 PC with an NVIDIA RTX 3070 graphics card for this test.
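A rough back-of-the-envelope check shows why 4k @ 120Hz needs an HDMI 2.1 source. HDMI 2.0 carries up to 18 Gbit/s, and its 8b/10b encoding leaves 14.4 Gbit/s for actual data. This sketch assumes 8-bit RGB (24 bits per pixel) and counts only visible pixels; because it ignores blanking intervals, it underestimates the real requirement. The constant and function names are illustrative.

```python
# HDMI 2.0: 18 Gbit/s on the wire, 8b/10b encoding leaves 80% for data.
HDMI_2_0_DATA_GBPS = 18.0 * 8 / 10


def active_pixel_gbps(width: int, height: int, refresh_hz: int, bits_per_pixel: int = 24) -> float:
    """Raw data rate of the visible pixels only.

    Blanking intervals are ignored, so the real link requirement is higher.
    """
    return width * height * refresh_hz * bits_per_pixel / 1e9


for hz in (60, 120):
    rate = active_pixel_gbps(3840, 2160, hz)
    fits = rate <= HDMI_2_0_DATA_GBPS
    print(f"4k @ {hz}Hz, 8-bit RGB: {rate:.2f} Gbit/s (within HDMI 2.0 data rate: {fits})")
```

At 120Hz, the visible pixels alone need about 23.9 Gbit/s, well above HDMI 2.0's 14.4 Gbit/s even before blanking is counted.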

4k @ 144Hz

This test is only important if you're a PC gamer with a very high-end graphics card from the last few years. Consoles don't support 144Hz, and very few TVs support this format, so for most TVs we test, it'll be N/A.

8k @ 60Hz

This test is only important if you have an 8k TV and your graphics card can output 8k content at 60 fps.

Additional Information

Why there's input lag on TVs

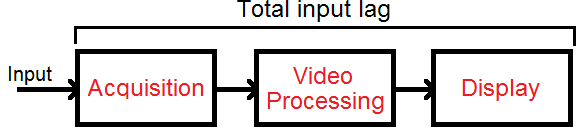

Three main factors contribute to a TV's input lag: acquiring the source image, processing the image, and displaying it.

Acquisition of the Image

The longer it takes for the TV to receive the source image, the more input lag there will be. With modern digital TVs, using an HDMI cable minimizes the acquisition time, as the image transfers from the source to the TV almost instantly. This phase rarely causes issues on modern TVs; it was more of a problem in the past with analog connections, like those used by early gaming consoles.

Video Processing

Once the image is in a format the video processor understands, the processor applies at least some processing to alter it. A few examples:

- Adjusting the colors and brightness

- Interpolating the picture to match the TV's refresh rate

- Scaling it (like 720p to 1080p, or 1080p to 4k)

- Adding variable refresh rate technology

The time this step takes depends on the speed of the video processor and the amount of processing needed. Although you can't control the processor's speed, you can control how many operations it needs to do by enabling and disabling settings. Usually, only more demanding processing settings, like motion interpolation, add input lag, while simpler ones, like brightness, don't.

Displaying the Image

Once the TV has processed the image, the processor sends it to the screen to be displayed. However, the screen can't make it appear instantly, and how long it takes depends on the display technology and the panel. Unfortunately, there's no way to improve or control this part, as it varies from TV to TV. Note that this is different from response time, which is how long it takes the pixels to change colors and which affects motion.

When do we start to notice a delay?

Most people will only notice delays when the TV is out of Game Mode, but some gamers might be more sensitive to input lag even in Game Mode. The input lag of the TV isn't the absolute lag of your entire setup; there's still your PC/console and your keyboard/controller. Every device adds a bit of delay, and the TV is just one piece in a line of electronics that we use while gaming. Check out this input lag simulator to know how much lag you're sensitive to. You can simulate what it's like to add a certain amount of lag, but this tool is relative to your current setup's lag, so even if you set it to 0 ms, there's still the default delay.
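To put the TV's share in perspective, the total delay you feel is roughly the sum of every stage in the chain. A minimal sketch; the stage names and millisecond values are made up for illustration and are not measurements:

```python
def end_to_end_lag_ms(stage_lags_ms: dict[str, float]) -> float:
    """Total delay from button press to visible change: each stage adds its own lag."""
    return sum(stage_lags_ms.values())


# Hypothetical example values, not measurements.
setup = {
    "controller": 4.0,
    "console/PC": 50.0,
    "TV input lag": 10.0,
}
total = end_to_end_lag_ms(setup)
tv_share = setup["TV input lag"] / total
print(f"total: {total:.1f} ms, TV share: {tv_share:.0%}")
```

With these made-up numbers, shaving a few milliseconds off the TV changes the total only slightly, which is why some gamers notice a difference and others don't.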

Other Notes and Related Settings

- The Game Mode setting is the most important setting for getting the lowest input lag possible. It varies between brands; some have Game Mode as a separate setting that you can enable within any picture mode, while others have a dedicated 'Game' picture mode. Go through your TV's settings to see which it uses. You'll know you have the right setting when the screen goes black for a second, as that's the TV switching into Game Mode.

- On some TVs, if you're using it as a PC monitor, you must go into PC Mode to get low input lag.

- Many TVs have an Auto Low Latency Mode feature that automatically switches the TV into Game Mode when you launch a game from a compatible device. Often, you need to enable a certain setting for it to work.

- Some peripherals like Bluetooth mice or keyboards add lag because Bluetooth connections have inherent lag, but those are rarely used with TVs anyway.

Conclusion

Input lag is the time it takes a TV to display an image on the screen after it first receives the signal. Low input lag is important for gaming, and while high input lag may be noticeable when scrolling through Netflix or other apps, it matters much less for that use. We test input lag using a special tool, measuring it at different resolutions and refresh rates and with different settings enabled to see how the signal type and settings affect the input lag.