A TV's refresh rate is simply the number of times the screen refreshes every second. There are TVs with many different refresh rates to choose from, but two of the most common are 60Hz and 120Hz. TVs with a 120Hz refresh rate or higher offer multiple advantages over 60Hz ones, especially when it comes to motion, and you need a 120Hz TV to take full advantage of the latest gaming consoles. In this article, we'll break down some of the advantages of going with a higher refresh rate, so you can choose the best TV for your needs. If you're ready to start shopping, check out the best 120Hz TVs.

What Is Refresh Rate?

Although you don't notice it happening, your TV's screen is constantly refreshing itself and drawing a new image, even if nothing on the screen is changing. The number of times that cycle happens per second is the refresh rate, and it's measured in Hertz (Hz). Unlike the resolution, the refresh rate isn't a fixed physical characteristic of the panel, and all TVs actually support multiple refresh rates; it's up to the TV manufacturer to decide which ones each model supports.
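
Because the refresh rate is a count per second, the time between two refreshes is simply 1,000 ms divided by the rate. The short Python sketch below (the function name is our own, purely for illustration) converts a few common refresh rates into refresh intervals:

```python
def refresh_interval_ms(refresh_hz: float) -> float:
    """Return the time between two screen refreshes, in milliseconds."""
    if refresh_hz <= 0:
        raise ValueError("refresh rate must be positive")
    return 1000.0 / refresh_hz


# A few common refresh rates, including the 48Hz and 96Hz modes used for 24p content.
for hz in (48, 60, 96, 120):
    print(f"{hz:>3} Hz: new image every {refresh_interval_ms(hz):.1f} ms")
```

At 60Hz, that works out to a new image roughly every 16.7 ms, and at 120Hz, roughly every 8.3 ms.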

When It Matters

If you're deciding between an entry-level 60Hz TV and a more expensive 120Hz one, knowing when and where the refresh rate matters is crucial to making an informed buying decision. Below, we'll break down the key benefits of upgrading to a 120Hz TV. Since most 120Hz TVs are higher-end models, they also tend to have other advantages that have nothing to do with the refresh rate itself, so this comparison focuses only on the benefits of a higher refresh rate.

Motion Clarity

A faster refresh rate helps improve the appearance of motion, but it isn't the only factor that matters. In the example pursuit photos above, taken from the TCL QM8K, the RTINGS text is significantly clearer in the 120Hz image. The TV's response time is nearly the same in both cases; the 120Hz image is clearer because there's less persistence blur. Both images are still a bit blurry, though: a higher refresh rate reduces persistence blur, but it doesn't necessarily reduce ghosting, which depends more on the TV's response time.

Here you can see two TVs placed side by side: a 60Hz model and a 120Hz model, with similar response times. We filmed these TVs in slow motion to easily compare each individual frame.
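
As a rough illustration of why less persistence means less blur: on a sample-and-hold display, when your eyes track a moving object, each frame stays still for one refresh interval while your eyes keep moving, so the image smears across roughly speed × hold time pixels. The sketch below assumes the content's frame rate matches the refresh rate, no black frame insertion, and an instantaneous response time, so it models persistence blur only. The 960 pixels-per-second pan speed and the function name are hypothetical.

```python
def persistence_blur_px(speed_px_per_s: float, refresh_hz: float) -> float:
    """Approximate persistence-blur width, in pixels, on a sample-and-hold display.

    Assumptions: each unique frame is held for one full refresh interval
    (content frame rate == refresh rate, no black frame insertion) and the
    pixels change instantly, so response-time ghosting is ignored.
    """
    hold_time_s = 1.0 / refresh_hz  # how long each frame stays on screen
    return speed_px_per_s * hold_time_s  # distance the tracking eye moves meanwhile


speed = 960  # hypothetical pan speed, in pixels per second
for hz in (60, 120):
    print(f"{hz:>3} Hz: about {persistence_blur_px(speed, hz):.0f} px of blur")
```

Halving the hold time halves the blur width (about 16 px at 60Hz versus 8 px at 120Hz in this example). Because the model ignores response time, it says nothing about ghosting, which is why a fast refresh rate alone doesn't guarantee perfectly sharp motion.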

Judder

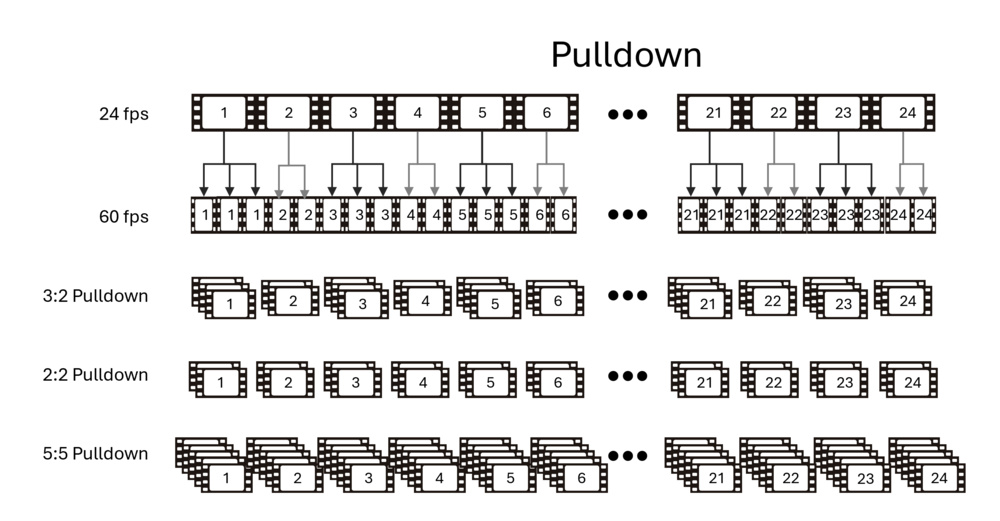

One of the biggest advantages of a 120Hz TV is its ability to play content meant to be displayed at 24 fps, which is common in movies, without judder. TVs with a 120Hz refresh rate can display 24p content perfectly by simply showing each frame multiple times, usually either five times (at the full 120Hz) or four (meaning the TV actually drops down to 96Hz). Some 60Hz TVs, especially those with a variable refresh rate (VRR) feature, can do this too by dropping their refresh rate to 48Hz instead of 60Hz, so they simply show each frame twice.

Other 60Hz TVs can't do that, though, and they have trouble removing 24p judder. To display 24p content at 60Hz, they use a technique known as 3:2 pulldown: 12 of the 24 frames are repeated three times, while the other 12 are repeated twice, for a total of 60 frames. This means some frames stay on screen for 50 ms (three refreshes) and others for about 33 ms (two refreshes). Not everybody notices this, but it causes some scenes, notably panning shots, to appear juddery.
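
The cadences described above can be worked out by splitting the available refreshes as evenly as possible across the source frames. This Python sketch (the helper name is hypothetical) counts how many refreshes each 24 fps frame gets at a given refresh rate; equal counts mean even pacing, while alternating counts are the uneven 3:2 cadence that causes judder. It's a simplified model of frame scheduling, not how any particular TV implements it.

```python
import math
from fractions import Fraction


def repeat_pattern(refresh_hz: int, content_fps: int = 24, frames: int = 4) -> list[int]:
    """Return how many refreshes each of the first `frames` source frames is shown for.

    Frame i occupies refresh slots ceil(i * r) up to (not including)
    ceil((i + 1) * r), where r = refresh_hz / content_fps. Fraction keeps
    the arithmetic exact, so 60 / 24 = 5/2 never suffers rounding errors.
    """
    ratio = Fraction(refresh_hz, content_fps)
    return [math.ceil((i + 1) * ratio) - math.ceil(i * ratio) for i in range(frames)]


for hz in (120, 96, 48, 60):
    pattern = repeat_pattern(hz)
    on_screen_ms = [round(n * 1000 / hz, 1) for n in pattern]
    print(hz, pattern, on_screen_ms)
```

At 120Hz, 96Hz, and 48Hz, every frame stays on screen for about 41.7 ms, while at 60Hz frames alternate between 50 ms and about 33.3 ms, which is the uneven pacing behind 24p judder.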

Gaming

Gaming at a higher refresh rate brings multiple benefits. As mentioned above, a higher refresh rate reduces the amount of persistence blur you'll see in games, so motion looks clearer and smoother. It also reduces input lag, since the TV can display a new frame every 8.3 ms instead of every 16.7 ms, so games feel more responsive.

Another, less obvious benefit applies if you're using a device that supports a variable refresh rate, or VRR. TVs that can't go above 60Hz are always locked into a fairly narrow VRR range, so if the frame rate drops below the bottom of that range (48 fps, for example), they can't make use of technology like Low Framerate Compensation (LFC), and you'll see tearing. LFC works by showing each frame two or more times to bring the refresh rate back inside the VRR range, which only works when the top of the range is well above the bottom. TVs that operate at 120Hz typically have a much wider VRR range, so they can take advantage of LFC to keep the screen tear-free even if the frame rate drops below 48 fps.
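
To make the LFC idea concrete, here's a simplified Python sketch. It assumes LFC picks the smallest frame multiplier (2, 3, and so on) that lands inside the VRR range; real implementations choose their multipliers in their own ways. The 48Hz to 60Hz and 48Hz to 120Hz ranges are illustrative examples, not the specs of any particular TV, and the function name is hypothetical.

```python
from typing import Optional


def lfc_refresh_hz(fps: float, vrr_min_hz: float, vrr_max_hz: float) -> Optional[float]:
    """Return a refresh rate that keeps a VRR display in sync with `fps`, or None.

    Inside the VRR range the display just matches the frame rate. Below it,
    this simplified LFC model repeats each frame 2, 3, ... times and picks the
    first multiple that lands back inside the range. None means no multiple
    fits, so the frame rate can't be displayed tear-free.
    """
    if fps <= 0:
        raise ValueError("frame rate must be positive")
    if vrr_min_hz <= fps <= vrr_max_hz:
        return fps
    multiplier = 2
    while fps * multiplier <= vrr_max_hz:
        if fps * multiplier >= vrr_min_hz:
            return fps * multiplier
        multiplier += 1
    return None


print(lfc_refresh_hz(40, 48, 60))   # None: doubling 40 fps to 80Hz overshoots 60Hz
print(lfc_refresh_hz(40, 48, 120))  # 80: each frame is shown twice
print(lfc_refresh_hz(20, 48, 120))  # 60: each frame is shown three times
```

With the narrow range, there's no multiple of 40 fps that fits between 48Hz and 60Hz, so the display can't stay in sync; with the wider range, the same frame rate simply runs at 80Hz.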

Most modern game consoles support 120 fps, including the Xbox Series X, the Nintendo Switch 2, and the PS5, so you need a 120Hz TV to take full advantage of those consoles. PCs have supported 120 fps and even higher for many years, and most modern graphics cards are more than powerful enough to take full advantage of this. The benefits of a 120Hz TV for gaming are very clear, and unless you're only gaming on previous-gen or retro devices, you're definitely better off getting a 120Hz TV over a 60Hz one.

Motion Interpolation

Another place where 120Hz is useful is if you enjoy the motion interpolation feature found on most TVs (the cause of the so-called Soap Opera Effect). It lets the TV generate intermediate frames between the real frames sent from your source, raising the frame rate to match the refresh rate. A 60Hz TV can interpolate 30 fps content, while a 120Hz TV can interpolate both 30 fps and 60 fps content, like sports and some shows. That gives a 120Hz TV an advantage over a 60Hz one, since it can interpolate more types of content.
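
The arithmetic behind which content can be interpolated is simple: the TV has to fill every refresh that the source doesn't supply. This hypothetical Python helper counts how many generated frames follow each real frame. It only does the counting, since the way TVs actually estimate motion between frames is proprietary and not modelled here, and it assumes the refresh rate is a whole multiple of the frame rate.

```python
def generated_frames_per_real_frame(source_fps: int, refresh_hz: int) -> int:
    """Count the interpolated frames a TV inserts after each real frame.

    Assumes refresh_hz is a whole multiple of source_fps. A result of 0
    means the content already fills every refresh, so there's nothing to add.
    """
    if source_fps > refresh_hz or refresh_hz % source_fps != 0:
        raise ValueError("sketch assumes refresh_hz is a whole multiple of source_fps")
    return refresh_hz // source_fps - 1


for hz in (60, 120):
    for fps in (30, 60):
        n = generated_frames_per_real_frame(fps, hz)
        print(f"{fps} fps on a {hz}Hz TV: {n} generated frame(s) per real frame")
```

A result of 0 is why a 60Hz TV can't interpolate 60 fps content: there are no spare refreshes left to fill.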

Fake Refresh Rate

TV manufacturers often market their refresh rates in ways that make them seem higher than they actually are. Samsung, for example, uses the term 'Motion Rate': a 60Hz TV has a Motion Rate of 120, while a 120Hz model has a Motion Rate of 240. In other words, Samsung simply doubles the refresh rate to come up with this number, and there's no real explanation as to why it's marketed that way. LG uses 'TruMotion,' Vizio has 'Effective Refresh Rate,' and Sony has two terms: 'MotionFlow XR' and 'X-Motion Clarity.' These marketing numbers don't really mean anything, so check the TV's specs to find its native refresh rate.

Conclusion

A TV's refresh rate is the number of times the screen refreshes itself per second. Although we can't see it, the TV draws a new image roughly every 8.3 ms at 120Hz, or every 16.7 ms at 60Hz. A higher refresh rate generally results in better motion handling, but not always, since other factors, like response time, also affect motion. The benefits of a 120Hz TV are clear, though, and most people are better off spending a bit more to get a TV that supports a 120Hz refresh rate.