Input lag on a monitor is the time it takes the monitor to process the signal it receives and for the image to start appearing on screen. Most monitors have low enough input lag that you won't notice any delay during regular desktop use, but getting the lowest input lag possible matters most to competitive gamers. We measure input lag with a specialized tool, at each monitor's native resolution and at different refresh rates.

We test the input lag on monitors the same way as on TVs, but the monitor testing is simpler because on TVs we run more tests with different resolutions and settings. Learn about the input lag of TVs and projectors.


When It Matters

When you're using a monitor, you want your actions to appear on the screen almost instantly, whether you're typing, clicking through websites, or gaming. With high input lag, you'll notice a delay between typing on your keyboard or moving your mouse and seeing the result on screen, and this can make the monitor almost unusable.

For gamers, low input lag is even more important because it can be the difference between winning and losing in games. A monitor's input lag isn't the only factor in the total amount of input lag because there's also delay caused by your keyboard/mouse, PC, and internet connection. However, having a monitor with low input lag is one of the first steps in ensuring you get a responsive gaming experience.

When does the input lag become noticeable?

Any monitor adds at least a few milliseconds of input lag, but most of the time, it's small enough that you won't notice it at all. There are some cases where the input lag increases to the point where it becomes noticeable, but that's very rare and may not be caused by the monitor alone. Your peripherals, like keyboards and mice, add more latency than the monitor, so if you notice any delay, it's likely because of those and not your screen.

There's no definitive threshold at which people start noticing input lag because everyone is different. A good estimate is that it starts to become noticeable at around 30 ms, but even a delay of 20 ms can be problematic for reaction-based games. You can try this tool that adds lag to simulate the difference between high and low input lag. You can use it to estimate how much input lag bothers you, but keep in mind that the result is relative because the tool adds lag on top of the latency you already have.

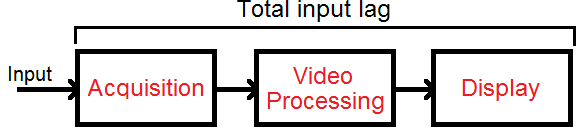

There are three main reasons why there's input lag during computer use, and it isn't just the monitor that has input lag. There's the acquisition of the image, the processing, and finally actually displaying it.

Acquisition Of The Image

The acquisition of the image has to do with the source and not with the monitor. The more time it takes for the monitor to receive the source image, the more input lag there'll be. This has never really been an issue with PCs since previous analog signals were virtually instant, and current digital interfaces like DisplayPort and HDMI have next to no inherent latency. However, some devices like wireless mice or keyboards may add delay. Bluetooth connections especially add latency, so if you want the lowest latency possible in the video acquisition phase, you should use a wired mouse or keyboard or get something wireless with very low latency.

Video Processing

Once the image is in a format that the video processor understands, the processor applies at least some processing to alter the image. A few examples:

- Adding overlays (like custom crosshairs), although the delay here is minimal

- Adjusting the frame rate, like using motion interpolation or judder removal, but it's very rare on monitors

- Scaling it (like 720p to 1080p, or 1080p to UHD), but this is more common on TVs

The time this step takes is affected by the speed of the video processor and the total amount of processing. Although you can't control the processor speed, you can control how many operations it needs to do by enabling and disabling settings. Most picture settings won't affect the input lag, and monitors rarely have any image processing, which is why the input lag on monitors tends to be lower than on TVs. One setting that could add delay is variable refresh rate, but most modern monitors are good enough that the lag doesn't increase much.

Displaying The Image

Once the monitor has processed the image, it's ready to be displayed on the screen. This is the step where the video processor sends the image to the screen. The screen can't change its state instantly, and there's a slight delay from when the image is done processing to when it appears on screen. Our input lag measurements consider when the image first appears on the screen and not the time it takes for the image to fully appear (which has to do with our Response Time measurements). Overall, the time it takes to display the image has a big impact on the total input lag.

Our Tests

In our input lag tests, we use a dedicated photodiode device connected to a PC, which flashes a white square on the screen and records the time it takes for the image to register on its sensor. We record multiple input lag measurements and report their average, which keeps outliers from skewing the result.
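The averaging step can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the text doesn't say how outliers are handled, so the median-based filter, the `max_deviation_ms` cutoff, and the function name `average_without_outliers` are all hypothetical, and the readings are made-up numbers.

```python
from statistics import mean, median


def average_without_outliers(samples_ms, max_deviation_ms=2.0):
    """Average the readings that lie within max_deviation_ms of the median.

    The cutoff is an assumed value chosen for illustration, not a
    documented part of the test procedure.
    """
    if not samples_ms:
        raise ValueError("need at least one measurement")
    center = median(samples_ms)
    kept = [s for s in samples_ms if abs(s - center) <= max_deviation_ms]
    # If every reading is far from the median, fall back to the median itself.
    return mean(kept) if kept else center


# Made-up readings in milliseconds, for illustration only.
readings = [4.1, 3.9, 4.0, 4.2, 11.5, 4.0]
print(f"{average_without_outliers(readings):.2f} ms")
```

With these sample values, the 11.5 ms reading is discarded and the remaining five are averaged.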

We place the photodiode tool in the middle of the screen so that it reads the flashing white square when it first appears on screen. Since monitors refresh the screen progressively from top to bottom, any new image reaches the center of the screen halfway through the refresh cycle. This means that if a monitor has a refresh rate of 144Hz, the screen refreshes itself 144 times every second, and a new image appears every 6.94 ms. Since we're measuring the input lag at the center, it takes at least 3.47 ms for the white square to appear in the middle. Any 144Hz monitor has a minimum input lag of 3.47 ms, so even if we measure an input lag of 4 ms, it's only 0.53 ms higher than the minimum, which is fantastic. Below is a table of the minimum input lag for the common refresh rates on monitors.

| Refresh rate | Time between frames | Minimum input lag |
|---|---|---|
| 60Hz | 16.67 ms | 8.33 ms |
| 120Hz | 8.33 ms | 4.17 ms |
| 144Hz | 6.94 ms | 3.47 ms |
| 165Hz | 6.06 ms | 3.03 ms |
| 240Hz | 4.17 ms | 2.08 ms |
| 360Hz | 2.78 ms | 1.39 ms |

As you can see, there's a bigger difference in minimum input lag between a 60Hz and a 120Hz monitor than between a 144Hz and a 360Hz monitor, even though the difference in refresh rate is greater in the second case. This means that getting a high refresh rate monitor of at least 144Hz, or even 165Hz or 240Hz, is ideal for gaming, but 360Hz doesn't bring much of an advantage in terms of input lag.
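The arithmetic behind these minimums is simple enough to reproduce yourself. The short Python sketch below is illustrative and its function names are made up for this example. It computes the time between frames as 1000 ms divided by the refresh rate, halves it to get the minimum lag at the screen center, and shows how far a measured value sits above that floor.

```python
def frame_time_ms(refresh_hz: float) -> float:
    """Time between two consecutive refreshes, in milliseconds."""
    return 1000.0 / refresh_hz


def min_center_lag_ms(refresh_hz: float) -> float:
    """Lowest lag measurable at the screen center: half of one refresh cycle."""
    return frame_time_ms(refresh_hz) / 2.0


def excess_lag_ms(measured_ms: float, refresh_hz: float) -> float:
    """How far a measured value sits above the theoretical minimum."""
    return measured_ms - min_center_lag_ms(refresh_hz)


for hz in (60, 120, 144, 165, 240, 360):
    print(f"{hz}Hz: {frame_time_ms(hz):.2f} ms per frame, "
          f"{min_center_lag_ms(hz):.2f} ms minimum")

# A 4 ms measurement on a 144Hz monitor, as in the example above.
print(f"{excess_lag_ms(4.0, 144):.2f} ms above the minimum")
```

Because the minimum scales with the inverse of the refresh rate, each step up in refresh rate saves less time than the one before it.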

Native Resolution At Max Hz

This input lag test represents the lowest lag a monitor can achieve while using its native resolution and its maximum refresh rate, including any optional factory overclock setting. It's the most relevant result for both general use and most gamers. To get the lowest amount of lag on some monitors, it might be necessary to enable a 'Game' or 'Instant' mode, but most monitors don't require any extra settings.

Native Resolution At 120Hz

More and more people are choosing monitors for Xbox Series X and PS5 gaming. Since these consoles support 120Hz refresh rates, it's important to know how well the monitor performs in terms of input lag when gaming at 120Hz from them. However, keep in mind that the consoles and controllers themselves add significant lag, meaning you won't get an instant gaming experience anyway.

Native Resolution At 60Hz

This 60Hz input lag test represents the lowest input lag a monitor can achieve while using its native resolution at a 60Hz refresh rate. It's the amount of lag that's most important for those planning to use their monitor to play console games that are limited to 60 fps.

Black Frame Insertion (BFI)

The black frame insertion input lag is the input lag when the backlight-strobing feature is enabled. This number is important for people who use their monitor's BFI feature to reduce persistence blur and enhance motion clarity. Many modern monitors don't have an issue with this, but there are still some monitors that have increased input lag with BFI, which is why we measure it.

Additional Information

Input lag with VRR and HDR

On Monitor Test Bench 1.1, we measured the input lag with the variable refresh rate (VRR) feature enabled and again in HDR. We stopped taking these measurements because they rarely made a difference and we often encountered issues with them. For most modern monitors, we don't expect VRR or HDR to add any significant input lag.

Other ways of measuring input lag

There's no one correct way to measure input lag. Our testing requires a specialized tool that not everyone has. The easiest way you can measure input lag is by connecting a computer to two different screens (a base screen and the test screen). You either have to know the input lag of your base screen or use a virtually instant CRT display. Connecting a laptop to the monitor is another option. Display a timer on both screens at once (preferably one that shows milliseconds) and take a picture of both screens; the difference between the two timer readings, plus the known input lag of the base screen, is your monitor's input lag. In this image example, an input lag of 40 ms (1:06:260 – 1:06:220) is indicated.

This is only an approximation, however, because your computer doesn't necessarily output both signals at once. It's also not very accurate because it depends on your camera's shutter speed and whether you can capture the correct frame with the time on it. It can give you a good indication, though.
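If you try the two-screen method, the subtraction is easy to script. The sketch below assumes the timer is shown as `minutes:seconds:milliseconds`, which is a guess based on the 1:06:260 example. The helper names `timer_to_ms` and `relative_lag_ms` are hypothetical.

```python
def timer_to_ms(stamp: str) -> int:
    """Convert a 'minutes:seconds:milliseconds' timer reading to milliseconds.

    The field layout is assumed from the 1:06:260 example.
    """
    minutes, seconds, millis = (int(part) for part in stamp.split(":"))
    return (minutes * 60 + seconds) * 1000 + millis


def relative_lag_ms(base_stamp: str, test_stamp: str, base_lag_ms: float = 0.0) -> float:
    """Estimate the test screen's lag from one photo of both timers.

    The test screen trails the base screen, so its timer shows an earlier
    value. Add the base screen's own lag if it is known (0 for a CRT).
    """
    return timer_to_ms(base_stamp) - timer_to_ms(test_stamp) + base_lag_ms


# Readings from the example: base screen shows 1:06:260, test screen 1:06:220.
print(relative_lag_ms("1:06:260", "1:06:220"), "ms")
```

Averaging the result over several photos should reduce the effect of shutter timing, although it won't remove the approximation entirely.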

Difference Between Input Lag and Response Time

Some people may confuse the input lag test with the response time. Input lag is the amount of time it takes for the monitor to display the received signal, but the response time is the time it takes for pixels to change from one color to the next. Even though our response time test consists of squares transitioning between different shades of gray and we use the same tool, the two aren't the same. The input lag test stops counting the time when the pixels first change color on the screen, and it doesn't wait for the full transition like our response time measurement does.
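The distinction can be shown on a single brightness trace. The Python sketch below is illustrative only: the trace is made up, and the 5% "first change" threshold and the 10% to 90% transition window are assumed conventions for this example, not the thresholds used in the actual tests.

```python
def first_crossing_ms(times_ms, levels, threshold):
    """Return the first sample time at which the level reaches the threshold."""
    for t, level in zip(times_ms, levels):
        if level >= threshold:
            return t
    return None


def input_lag_ms(times_ms, levels, threshold=0.05):
    """Time until the pixels first start to change (assumed 5% threshold)."""
    return first_crossing_ms(times_ms, levels, threshold)


def response_time_ms(times_ms, levels, low=0.10, high=0.90):
    """Duration of the transition itself (assumed 10% to 90% window)."""
    start = first_crossing_ms(times_ms, levels, low)
    end = first_crossing_ms(times_ms, levels, high)
    if start is None or end is None:
        return None
    return end - start


# Made-up normalized brightness samples, one per millisecond after the signal is sent.
times = [0, 1, 2, 3, 4, 5, 6, 7, 8]
levels = [0.0, 0.0, 0.0, 0.0, 0.06, 0.30, 0.60, 0.92, 1.0]

print("input lag:", input_lag_ms(times, levels), "ms")
print("response time:", response_time_ms(times, levels), "ms")
```

In this made-up trace, the input lag clock stops at 4 ms, when the pixels first start to change, while the response time covers the 2 ms the transition takes after that.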

Learn more about response time

Why is input lag testing not as detailed as for TVs?

Our input lag testing for TVs uses the same tool and testing process, but we take many different measurements. We take it with different resolutions to see how upscaling affects the input lag, in and out of Game Mode, with motion interpolation, and with VRR. TVs have more results than monitors because of the way people use their TVs. You often use your TV with different sources at different settings, while on a monitor, you just have one PC connected, and the settings rarely change.

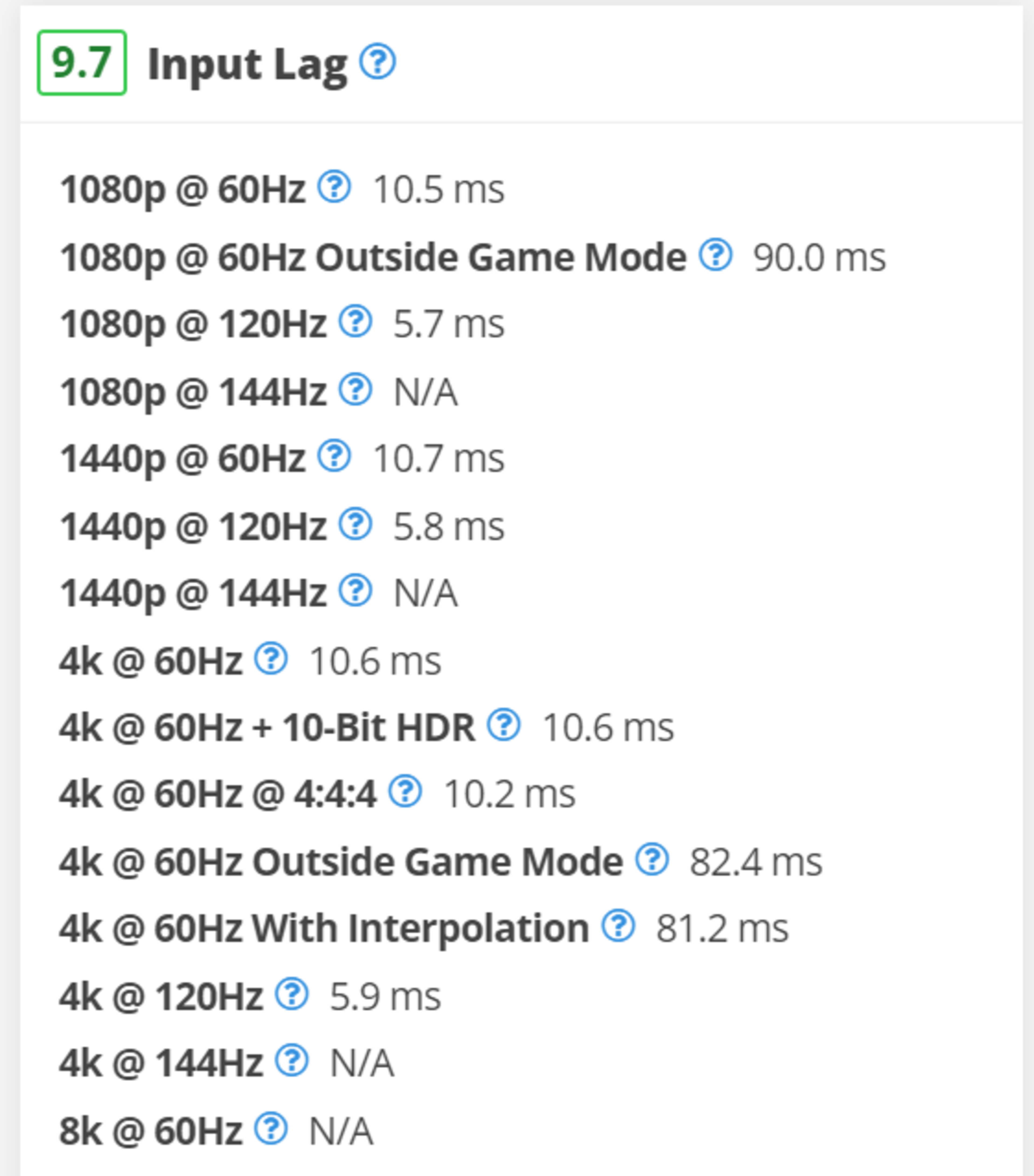

You can see in the screenshot on the right all the different results we test for on a TV. This is from the LG C2 OLED, which has low input lag for a TV, but you can also see how disabling its Game Mode causes an increase in input lag.

How To Get The Best Results

PC monitors tend to have a much lower input lag than the average TV, making input lag less of an issue for most people. More sensitive users can adjust settings on most monitors to reduce it even further. As a general rule, try the following (which is how we set up the displays in our tests):

- Set the monitor to Game or Instant Mode if it has one, although any picture mode is most likely good enough.

- Disable all picture enhancement settings, including local dimming, since we test with local dimming disabled.

- Enable upscaling from your graphics card instead of the monitor.

- Try using wired keyboards and mice, as they have the lowest input lag, and if you prefer something wireless, use the USB receiver instead of Bluetooth.

Conclusion

Input lag is the amount of time it takes for your monitor to display an image or input on the screen from when it receives it. It’s mostly important for competitive gamers, but you can also notice a delay when the input lag is high during regular desktop use. We test to find the lowest amount of lag a monitor can have, as well as how much lag is present when a monitor is displaying lower refresh rates.