Many monitors use LED lights behind their LCD panels to illuminate an image. While it's common for a monitor to maintain a consistent backlight brightness, some monitors flicker their backlight very rapidly. We test for the flicker frequency on monitors using a specialized photodiode tool, and we measure the flicker frequency at different backlight levels to see whether, and at what level, flicker starts.

OLED monitors don't use a backlight, as every pixel is individually lit. While you don't experience backlight flicker with OLEDs, they do have a very brief decrease in pixel brightness every time they refresh. However, the decrease in brightness is generally less than when an LED backlight flickers, and the dimming happens line-by-line rather than all at once.

Flicker is different from backlight strobing, as our article on backlight strobing explains.

Test Results

Test Methodology Coverage

We've tested image flicker since our first Test Bench, 1.0. We haven't changed how we test image flicker, but starting with Test Bench 1.1, we moved Backlight Strobing (BFI) out of the image flicker test and into its own test.

| Test | 1.0 | 1.1 | 1.2 | 2.0.1 | 2.1 and newer |
|---|---|---|---|---|---|
| Flicker-Free | ✅ | ✅ | ✅ | ✅ | ✅ |
| PWM Dimming Frequency | ✅ | ✅ | ✅ | ✅ | ✅ |
| Backlight Strobing (BFI) | ✅ | ❌ | ❌ | ❌ | ❌ |

When It Matters

While most monitor backlights project light at a consistent level of brightness, some monitors flicker their backlight on and off very rapidly. We call this flickering pulse width modulation (PWM) flicker. Because our vision integrates light over a brief interval, we perceive the backlight as dimmer rather than seeing a series of pulses, provided the flicker happens quickly enough. Most monitors today don't have perceivable flicker. While flicker is often used to lower a monitor's perceived brightness, a few monitors will flicker even at their maximum brightness.
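As a rough illustration of that averaging, here's a minimal Python sketch that models the backlight as an ideal square wave and treats perceived brightness as the mean light output over time. Both are simplifications of how vision actually works, and the function names `pwm_waveform` and `perceived_brightness` are hypothetical.

```python
def pwm_waveform(duty_cycle: float, samples_per_period: int = 100, periods: int = 10) -> list[float]:
    # Idealized square wave: 1.0 while the backlight is on, 0.0 while it's off.
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    on_samples = round(duty_cycle * samples_per_period)
    one_period = [1.0] * on_samples + [0.0] * (samples_per_period - on_samples)
    return one_period * periods


def perceived_brightness(waveform: list[float]) -> float:
    # Simplified model of the eye: average the light output over the whole interval.
    return sum(waveform) / len(waveform)


for duty in (1.0, 0.5, 0.25):
    level = perceived_brightness(pwm_waveform(duty))
    print(f"backlight on {duty:.0%} of the time -> looks {level:.0%} as bright")
```

Under this model, a backlight that's on half the time simply looks half as bright, as long as the pulses are too fast to see individually.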

Our Tests

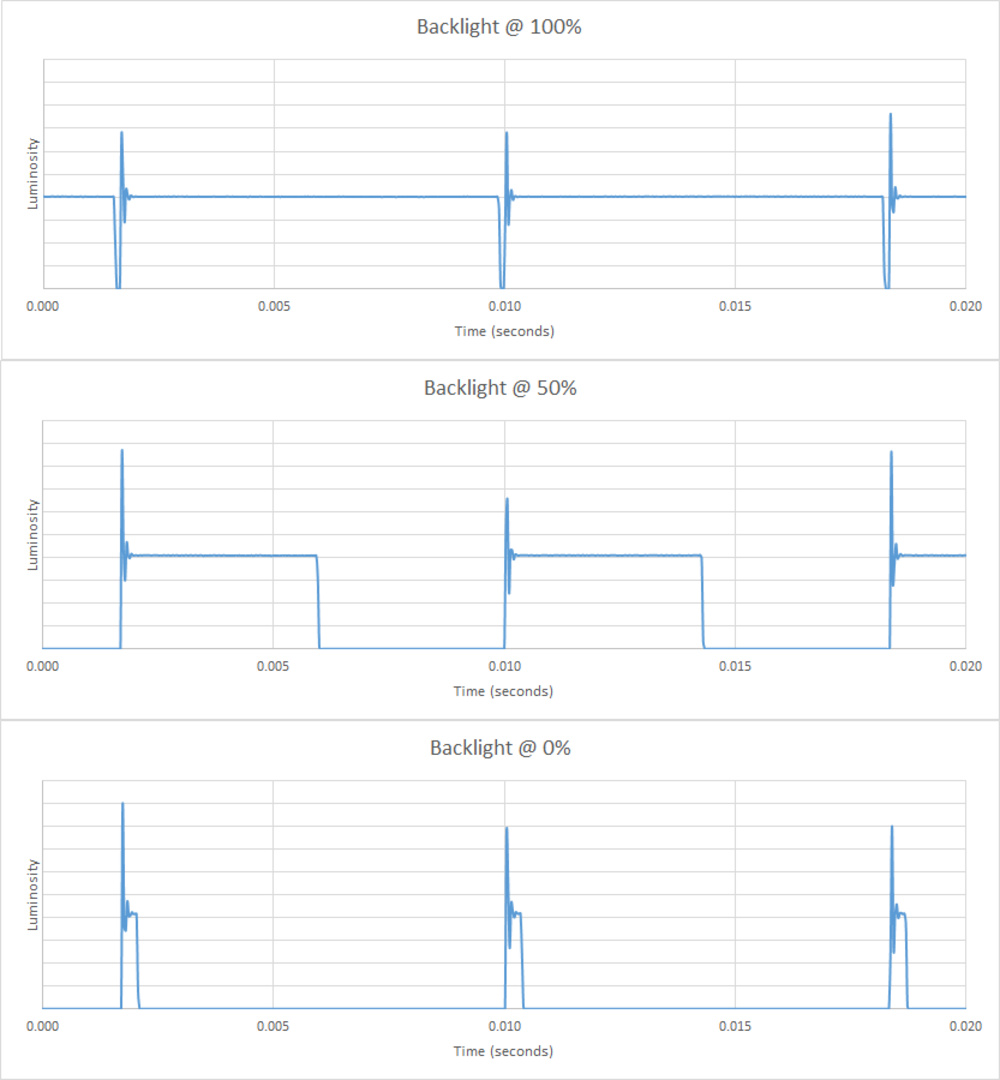

We test for the flicker frequency with the same tool we use to test input lag. We place the photodiode tool directly on the center of a 100% white screen and use software to record and measure the flicker frequency with the backlight at 100%, 50%, and 0% brightness. If we notice anything strange, we also check the flicker with an oscilloscope.
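The measurement software isn't described in detail here, so the following is only a minimal sketch of one common way to get a frequency from a photodiode recording: find where the signal crosses its midpoint on the way up, then average the spacing between those crossings. The function name, the 100kHz sample rate, and the 2% flatness tolerance are assumptions for illustration, and the input is a synthetic square wave, not real measurement data.

```python
from typing import List, Optional


def estimate_flicker_hz(
    trace: List[float], sample_rate_hz: float, min_swing: float = 0.02
) -> Optional[float]:
    """Estimate the flicker frequency of evenly sampled photodiode readings.

    Returns None when the trace is essentially a straight line (no flicker).
    min_swing is an assumed flatness tolerance, as a fraction of the peak reading.
    """
    high, low = max(trace), min(trace)
    if high <= 0 or (high - low) / high < min_swing:
        return None  # no meaningful change in luminosity
    threshold = (high + low) / 2
    # Sample indices where the signal crosses the midpoint on its way up.
    rising = [i for i in range(1, len(trace)) if trace[i - 1] < threshold <= trace[i]]
    if len(rising) < 2:
        return None  # fewer than one full cycle recorded
    # Average spacing between rising edges, in samples, then convert to Hz.
    period_samples = (rising[-1] - rising[0]) / (len(rising) - 1)
    return sample_rate_hz / period_samples


# Synthetic 240Hz square wave sampled at 100kHz for 0.1 s.
SAMPLE_RATE_HZ = 100_000
synthetic = [1.0 if (n * 240 / SAMPLE_RATE_HZ) % 1 < 0.5 else 0.2 for n in range(10_000)]
print(estimate_flicker_hz(synthetic, SAMPLE_RATE_HZ))  # close to 240
print(estimate_flicker_hz([0.8] * 10_000, SAMPLE_RATE_HZ))  # None: flat line
```

Averaging over many cycles rather than timing a single one makes the estimate less sensitive to where individual samples happen to fall.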

Backlight Picture

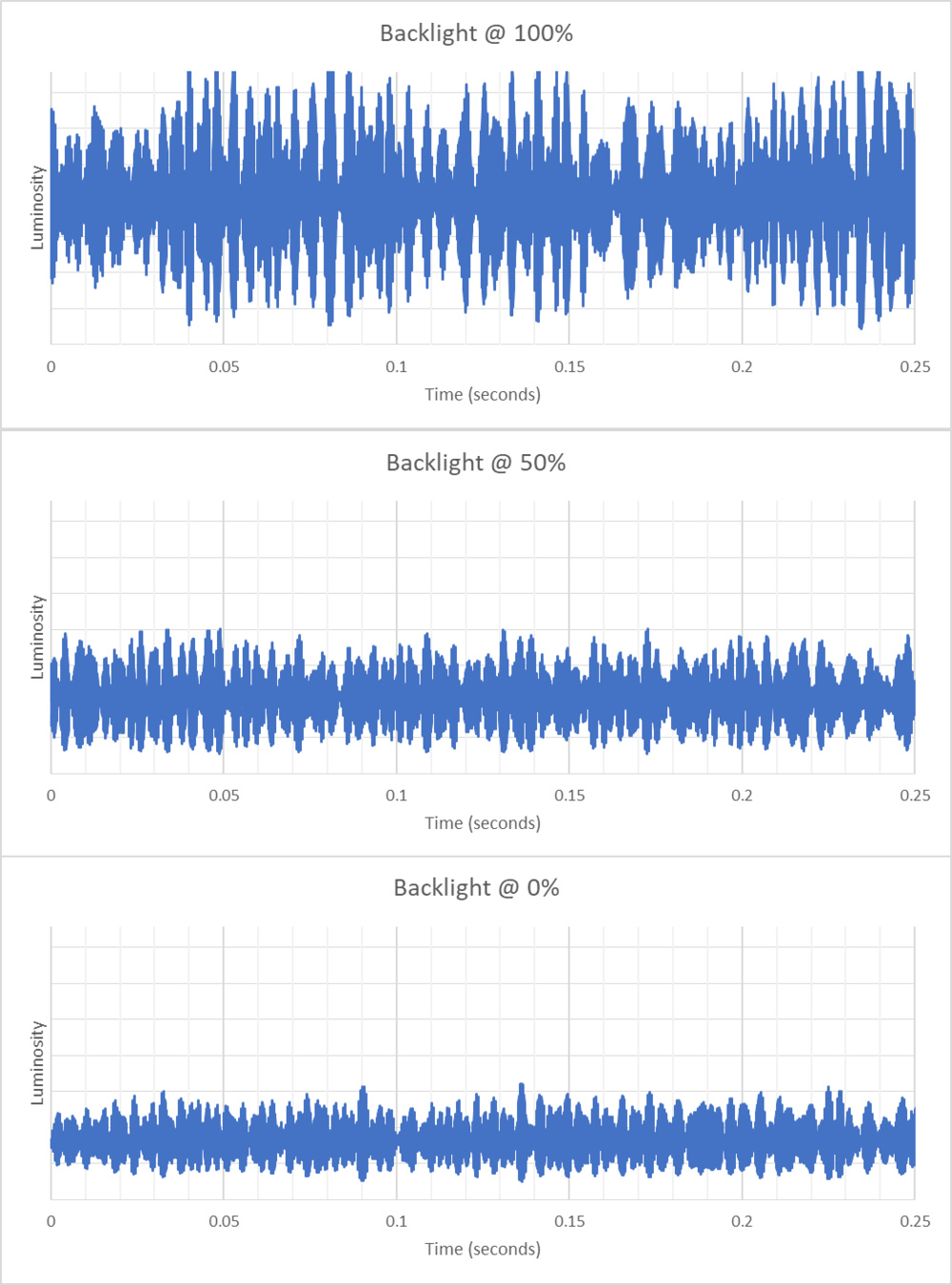

The software plots charts of the backlight intensity at different levels, which we include in every review. As you can see in the charts below, the x-axis is time, and the y-axis is luminosity. The luminosity scale is based on that particular monitor's maximum brightness, so you can't compare brightness fluctuations between different models. Most IPS and VA monitors have a straight line across the entire chart at all brightness levels, meaning there's no change in luminosity, and therefore no flicker. You can see this below with the Dell U2725QE:

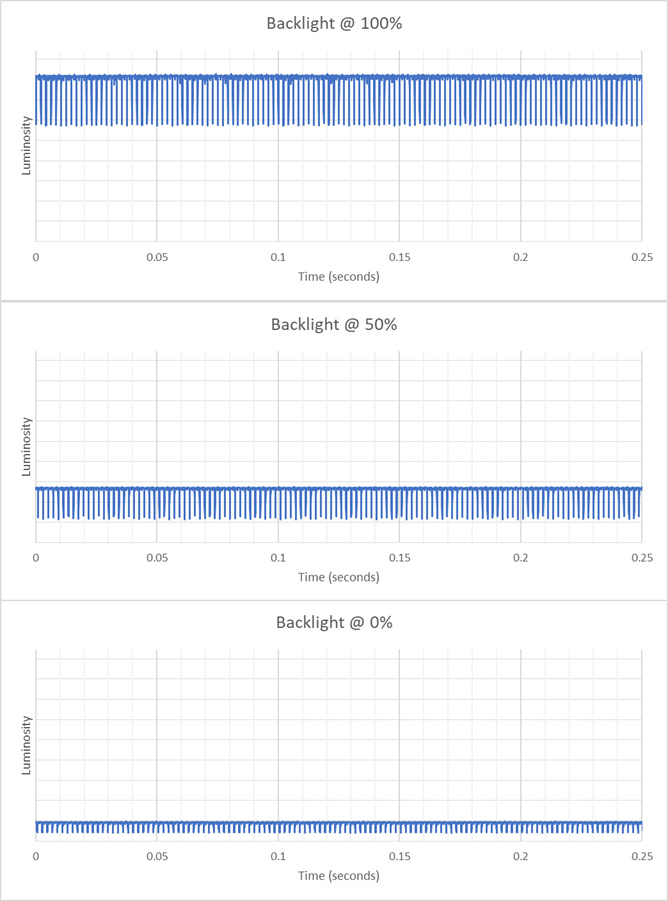
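The point about the relative luminosity scale can be made concrete with a small sketch. It assumes the chart divides each reading by that monitor's own peak; the readings are made up, and the helper names are hypothetical. Two monitors whose absolute swings differ by a factor of four produce identical charts, which is why a chart alone can't tell you which model dims more in absolute terms.

```python
from typing import List


def normalize_to_peak(trace: List[float]) -> List[float]:
    # Express every reading as a percentage of this monitor's own maximum,
    # matching how the chart's luminosity axis is scaled.
    peak = max(trace)
    return [100.0 * reading / peak for reading in trace]


def fluctuation_percent(trace: List[float]) -> float:
    # Peak-to-peak swing of the normalized chart; 0 means a straight line.
    chart = normalize_to_peak(trace)
    return max(chart) - min(chart)


# Hypothetical readings in cd/m² from monitors with very different brightness.
bright_monitor = [400.0, 200.0, 400.0, 200.0]
dim_monitor = [100.0, 50.0, 100.0, 50.0]
steady_monitor = [250.0, 250.0, 250.0, 250.0]

print(fluctuation_percent(bright_monitor))  # 50.0
print(fluctuation_percent(dim_monitor))     # 50.0
print(fluctuation_percent(steady_monitor))  # 0.0
```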

A few VA and IPS displays use PWM dimming, pulsing the backlight to decrease brightness. However, most of the displays available today that do this pulse at 1000Hz or higher. You can see this below with the Acer Nitro XV275K P3biipruzx:

Some older displays have PWM dimming, but flicker the backlight below 1000Hz. You can see an example of this with the LG 32UL950-W, which has a 240Hz PWM dimming frequency.

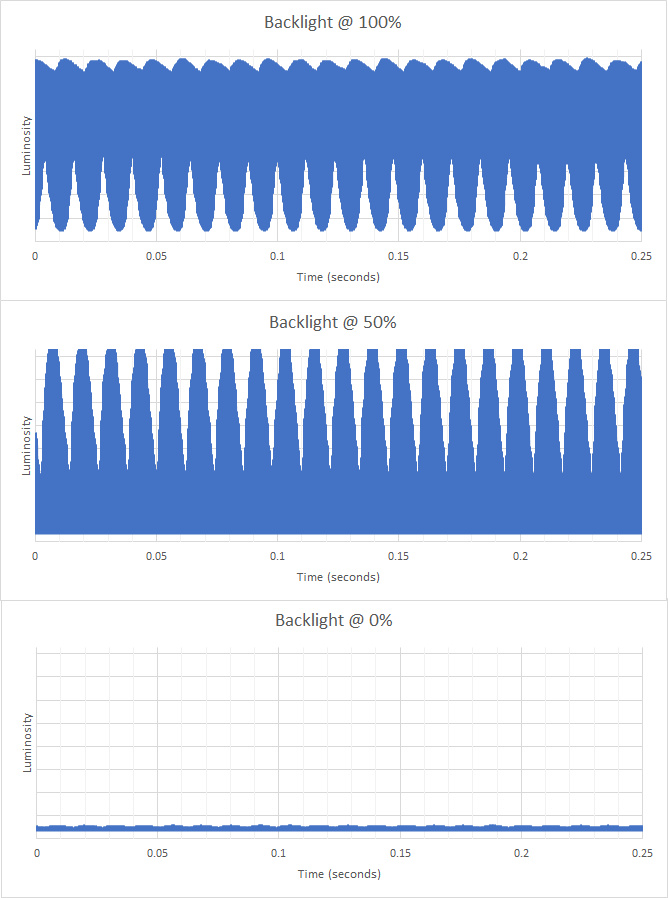

OLED Flicker

OLEDs have a dip in brightness that corresponds to the refresh rate, as you can see with the LG 27GX790A-B below. However, this isn't PWM flicker, as OLEDs don't have backlights. Additionally, this dip in brightness happens line-by-line, unlike PWM flicker, where all pixels are affected at the same time.

Flicker-Free

We consider a monitor to be flicker-free when it either doesn't flicker at all or flickers at 1000Hz or faster. Any repeating dip in brightness below 1000Hz results in a 'No'.

OLED monitors don't get a 'Yes' for Flicker-Free because they have a slight dip in brightness that corresponds to their refresh rate. The extent of this dip varies between monitors, but it's generally smaller than the dip in brightness that occurs with PWM flicker.
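The Flicker-Free rule reduces to a single threshold check. This is a minimal sketch, not the software used for our reviews: it assumes the only input is the frequency of the repeating brightness dip, with `None` standing for a flat line. The function name `flicker_free` is hypothetical, and the 144Hz OLED and 2000Hz PWM values are illustrative rather than measurements.

```python
from typing import Optional

FLICKER_FREE_MIN_HZ = 1000.0  # threshold from the Flicker-Free definition


def flicker_free(dip_frequency_hz: Optional[float]) -> str:
    """Return the Flicker-Free result for a monitor.

    dip_frequency_hz is the frequency of any repeating dip in brightness
    (PWM flicker or an OLED refresh-rate dip), or None if there's no dip.
    """
    if dip_frequency_hz is None or dip_frequency_hz >= FLICKER_FREE_MIN_HZ:
        return "Yes"
    return "No"


print(flicker_free(None))  # Yes: straight line, no flicker
print(flicker_free(240))   # No: 240Hz PWM, like the LG 32UL950-W
print(flicker_free(2000))  # Yes: fast PWM at 1000Hz or more
print(flicker_free(144))   # No: a hypothetical OLED dipping at a 144Hz refresh rate
```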

PWM Dimming Frequency

The PWM Dimming Frequency is simply the frequency at which the backlight flickers.

This result affects the score for the Image Flicker test. Monitors that don't flicker always get a perfect score of 10, and a monitor that does flicker still gets a perfect score if its flicker frequency is over 1000Hz.

Additional Information

Image Flicker vs Backlight Strobing

Backlight strobing is when a monitor's backlight is intentionally flickered at set intervals to reduce persistence blur. Although it's related to the flicker frequency, we evaluate it separately and in more detail in our Backlight Strobing (BFI) test. With backlight strobing, the pulses are intentional, and you can turn them on and off. Image flicker, on the other hand, isn't a goal in itself, and you can't turn it off.

Types Of Pulse Width Modulation

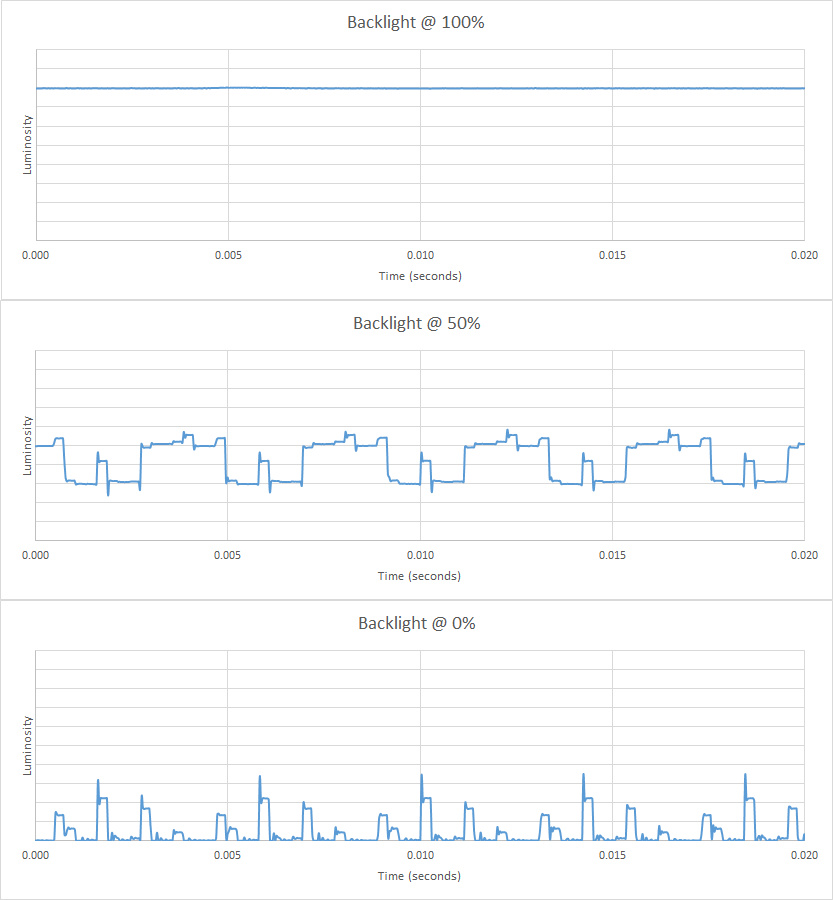

Manufacturers implement pulse width modulation in different ways, but one of the more common techniques is shortening the duty cycle. The duty cycle is the fraction of each cycle during which the pulse is on, so shortening it reduces how long the backlight stays lit. Below are two examples from TVs that use different types of PWM, but monitors that use PWM apply the same techniques. With the LG NANO75 2021, the backlight flickers at all brightness levels, and the difference between the 100%, 50%, and 0% brightness settings is the duty cycle: the backlight stays on for less time as you decrease the brightness.
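To put numbers on the duty cycle: the PWM frequency fixes how long each cycle lasts, and the duty cycle fixes how much of that cycle the backlight is on. The sketch below assumes a 240Hz PWM frequency and duty cycles of 100%, 50%, and 25% purely for illustration; they aren't measurements from the LG NANO75 or any other model, and `on_off_times_ms` is a hypothetical helper.

```python
from typing import Tuple


def on_off_times_ms(frequency_hz: float, duty_cycle: float) -> Tuple[float, float]:
    """Return (on_time, off_time) in milliseconds for one PWM cycle."""
    period_ms = 1000.0 / frequency_hz  # length of one full on/off cycle
    on_ms = period_ms * duty_cycle     # portion of the cycle the backlight is lit
    return on_ms, period_ms - on_ms


# Assumed 240Hz PWM frequency with illustrative duty cycles.
for duty in (1.0, 0.5, 0.25):
    on_ms, off_ms = on_off_times_ms(240, duty)
    print(f"duty {duty:.0%}: on {on_ms:.2f} ms, off {off_ms:.2f} ms")
```

As the duty cycle shrinks, the backlight still pulses 240 times per second, but each pulse gets shorter and each dark gap gets longer.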

How To Check For Flicker

- A monitor can introduce image flicker at lower backlight levels even if it's flicker-free at its maximum brightness. If you're concerned that your monitor flickers at lower backlight levels, set the brightness to its lowest setting and wave your hand (or any object) in front of the screen. If your hand looks like it's moving under a strobe light, the monitor flickers. Raise the brightness until the effect disappears. If you don't see the effect at all, there's no visible flicker.
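The hand test works because the light from the screen reaches your hand in pulses, so you see it at a series of separate positions instead of a smooth blur. The gap between those copies is roughly the distance the hand travels during one flicker period. Here's a small sketch of that arithmetic, assuming a hand speed of 1 m/s chosen only for illustration (`ghost_spacing_mm` is a hypothetical helper). It doesn't predict whether a given gap is visible, only that slower flicker spreads the copies farther apart.

```python
def ghost_spacing_mm(hand_speed_m_per_s: float, flicker_hz: float) -> float:
    """Distance the hand moves between two consecutive backlight pulses, in mm."""
    return hand_speed_m_per_s * 1000.0 / flicker_hz


# Assumed hand speed of 1 m/s; real hand waves vary a lot.
for hz in (240, 1000, 2000):
    print(f"{hz}Hz flicker: copies about {ghost_spacing_mm(1.0, hz):.1f} mm apart")
```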

Conclusion

LED-backlit monitors use a backlight to illuminate the image on the panel. Some of these monitors dim the backlight with a technique called pulse width modulation, which sends short pulses of light and creates a flicker effect. Nearly all LED-backlit monitors we've tested are flicker-free, but a few do flicker. If you're concerned about flicker, check the monitor's flicker score in our review.